Where is Design?

OBJECTIVE

Experimenting with mixed reality and tangible interaction to improve the user experience.

DELIVERABLE

A working prototype built with Unity and Arduino.

Type:

Mixed Reality Prototype

Duration:

4 weeks

Skill:

Mixed Reality Prototyping, Physical Computing

Tools:

Microsoft HoloLens, Unity/C#, Arduino, Laser Cutting

LIMITED INPUT

TANGIBLE INPUT

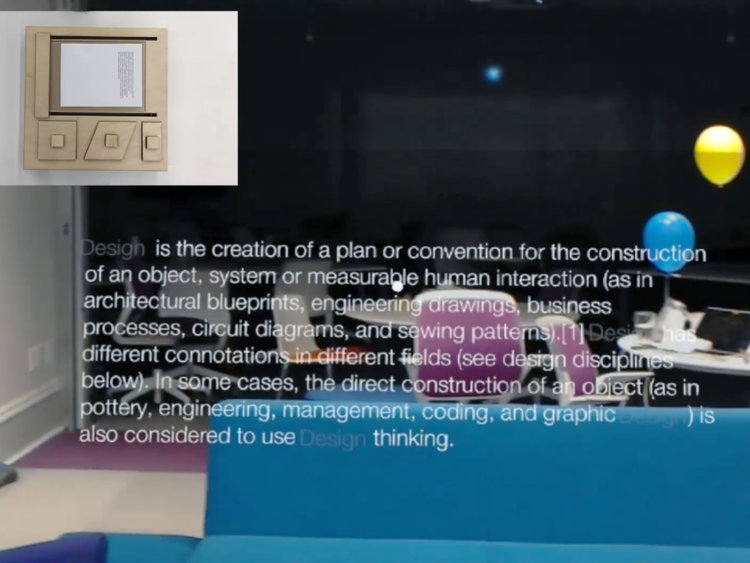

Take one of the three tokens (bold, italic, or condensed) from the box, and the font style in mixed reality changes accordingly.

01

Design the input

This project focuses on a kinetic typeface. Since I was working on tangible input, I needed to find the proper metaphor for the input device.

The input device went through several iterations. Eventually I decided to use shapes rather than symbols to represent the font style.

02

Construct the system

I chose the Particle Photon, which provides a built-in web service. When an event is triggered, the Photon sends it to its cloud server. The HoloLens keeps listening to that server and waits for events. Below is the system diagram.
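The device side of this flow can be sketched in plain C++ as follows. This is only a minimal illustration under stated assumptions, not the firmware used in the prototype: the `TokenBox` class, the `token/taken` and `token/returned` event names, and the boolean sensor readings are all hypothetical. On a real Photon, the `publish` callback would typically forward to `Particle.publish`; here it is left as a plain callback so the logic can run anywhere.

```cpp
#include <array>
#include <cstddef>
#include <functional>
#include <string>
#include <utility>

// Hypothetical token slots on the box, named after the three font styles.
enum class Token { Bold = 0, Italic = 1, Condensed = 2 };

const char* styleName(Token t) {
    switch (t) {
        case Token::Bold:      return "bold";
        case Token::Italic:    return "italic";
        case Token::Condensed: return "condensed";
    }
    return "unknown";
}

// Remembers which tokens sit on the box and reports every change
// through a publish callback (assumed to forward events to the cloud).
class TokenBox {
public:
    using Publisher =
        std::function<void(const std::string& event, const std::string& data)>;

    explicit TokenBox(Publisher publish) : publish_(std::move(publish)) {
        present_.fill(true);  // assumption: all tokens start on the box
    }

    // Call once per loop with the current readings (true = token on the box).
    void update(const std::array<bool, 3>& readings) {
        for (std::size_t i = 0; i < readings.size(); ++i) {
            const char* style = styleName(static_cast<Token>(i));
            if (present_[i] && !readings[i]) {
                publish_("token/taken", style);     // token was lifted
            } else if (!present_[i] && readings[i]) {
                publish_("token/returned", style);  // token was put back
            }
            present_[i] = readings[i];
        }
    }

private:
    Publisher publish_;
    std::array<bool, 3> present_;
};
```

Publishing only on changes, rather than on every loop, keeps the event stream small, so the listening side only has to react when a token is actually taken or returned.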

03

DEMO & TEST

I gave a short presentation and demo in the last class. User feedback was positive: people really liked the idea of tangible input and encouraged me to explore other possibilities.

04

WHAT I LEARNED & NEXT STEPS

This was the first time I learned and used Unity. With my technical background, I picked up Unity very fast and quickly figured out how to build the digital part of this project. The input device was harder to design. I went through multiple iterations before I figured out a proper way to communicate font style. Tangible input is more humane, but the physical form matters.

For next steps, I will keep improving my AR/VR prototyping skills and explore other possibilities for tangible input.