OBJECTIVE

Design a medical communication learning tool for medical students.

SOLUTION

ORA is an AI assistant that helps medical students build communication skills from real-life conversations in the hospital.

Type:

Product Design

Duration:

3 months, 2018.01 - 2018.04

Team Members:

Jeffrey Chou, Zahin Ali, Suzanne Choi, Angela Wang

My Role:

Secondary research

Design research execution + synthesis

Design of the AI data collection scheme

UX Design for data privacy

High-fidelity prototypes (smartwatch / VR)

Research Methods:

Interviews, Affinity Diagramming, Co-Design Workshop, Diary Study, Concept Speed Dating, Usability Testing

THE CHALLENGE

THE OUTCOME

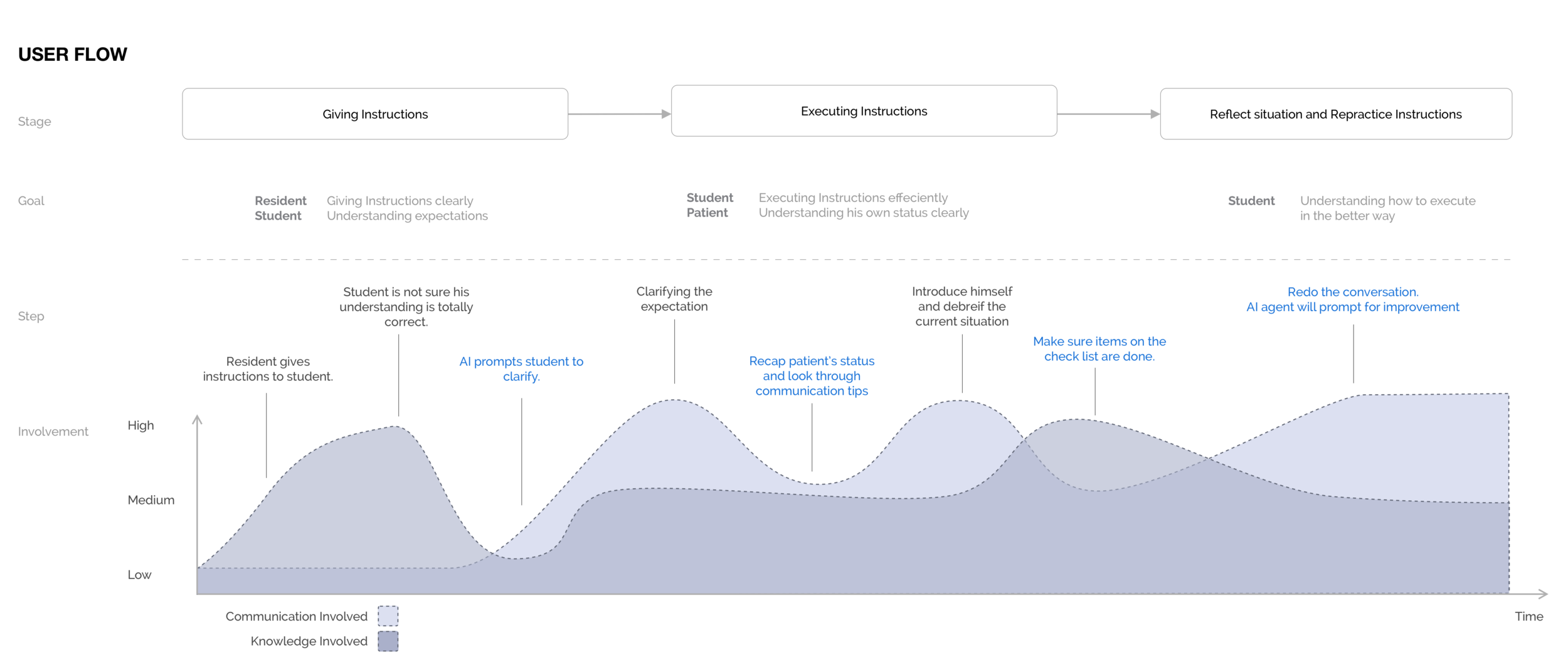

HOW ORA TEACHES

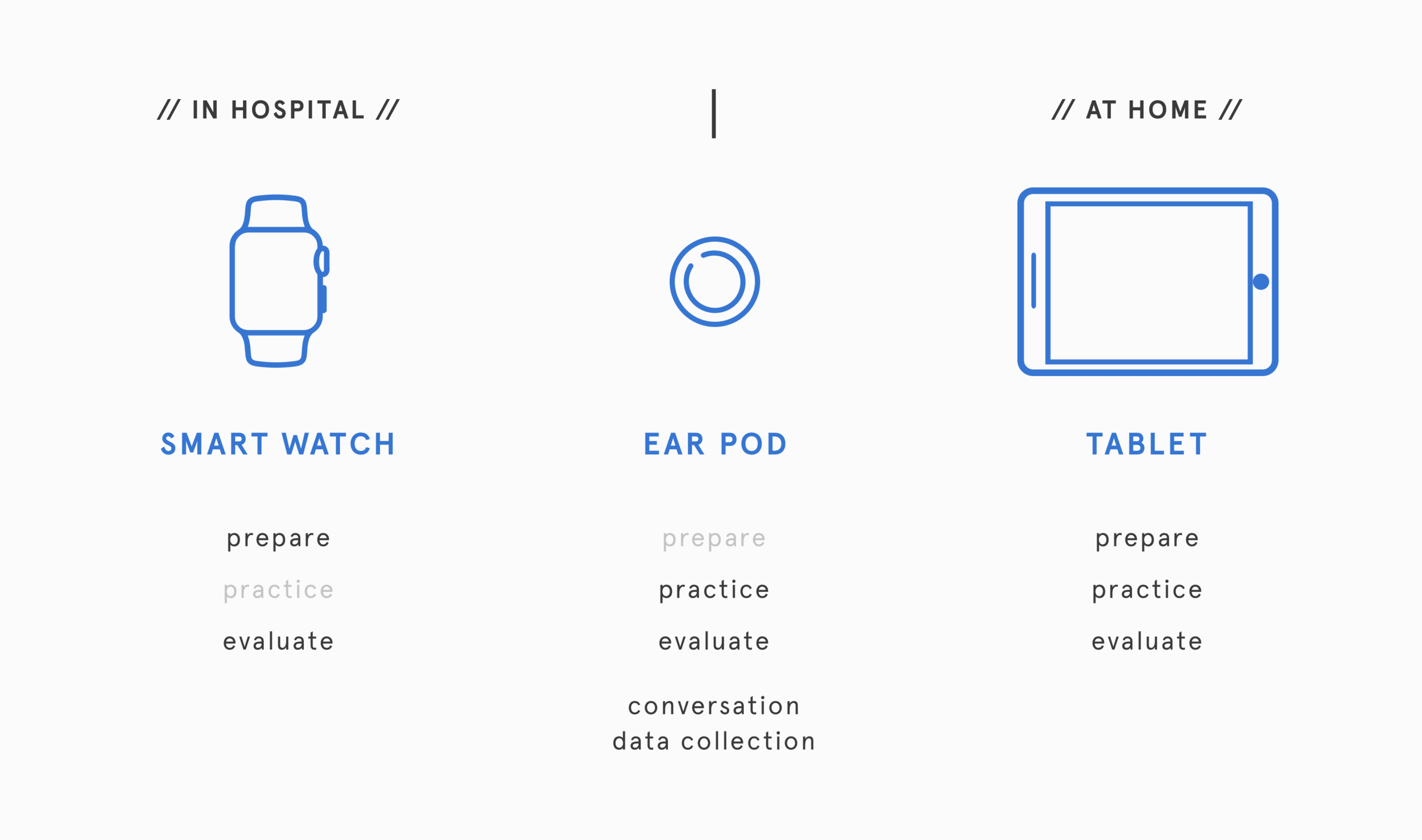

ORA's learning experience is built on three devices: a smartwatch, an earpiece, and a tablet. Together, they support the tried-and-true "prepare–practice–evaluate" learning framework that is central to effective communication learning.

Smartwatch

The smartwatch enables hands-free access to personalized learning materials and instant feedback in the hospital.

Prepare

Before a conversation, the student can view a list of best-practice items that ORA populates based on what the student has recently learned or fallen short on.

Evaluate

After the conversation, ORA provides speech evaluations by topic and pinpoints the specific terms and behaviors that caused a conversation breakdown.

Earbud

With the patient’s approval, the earbud records the student’s conversation; it also provides access to ORA’s virtual assistant.

Practice (Hints)

During the conversation, the earbud plays a distinct notification for each common type of conversation pitfall, allowing in-the-moment correction without major interruption.


Evaluate

ORA’s voice assistant offers detailed feedback on any past conversation and creates the student’s learning schedule upon request.

Tablet

The tablet is the principal learning tool for at-home learning.

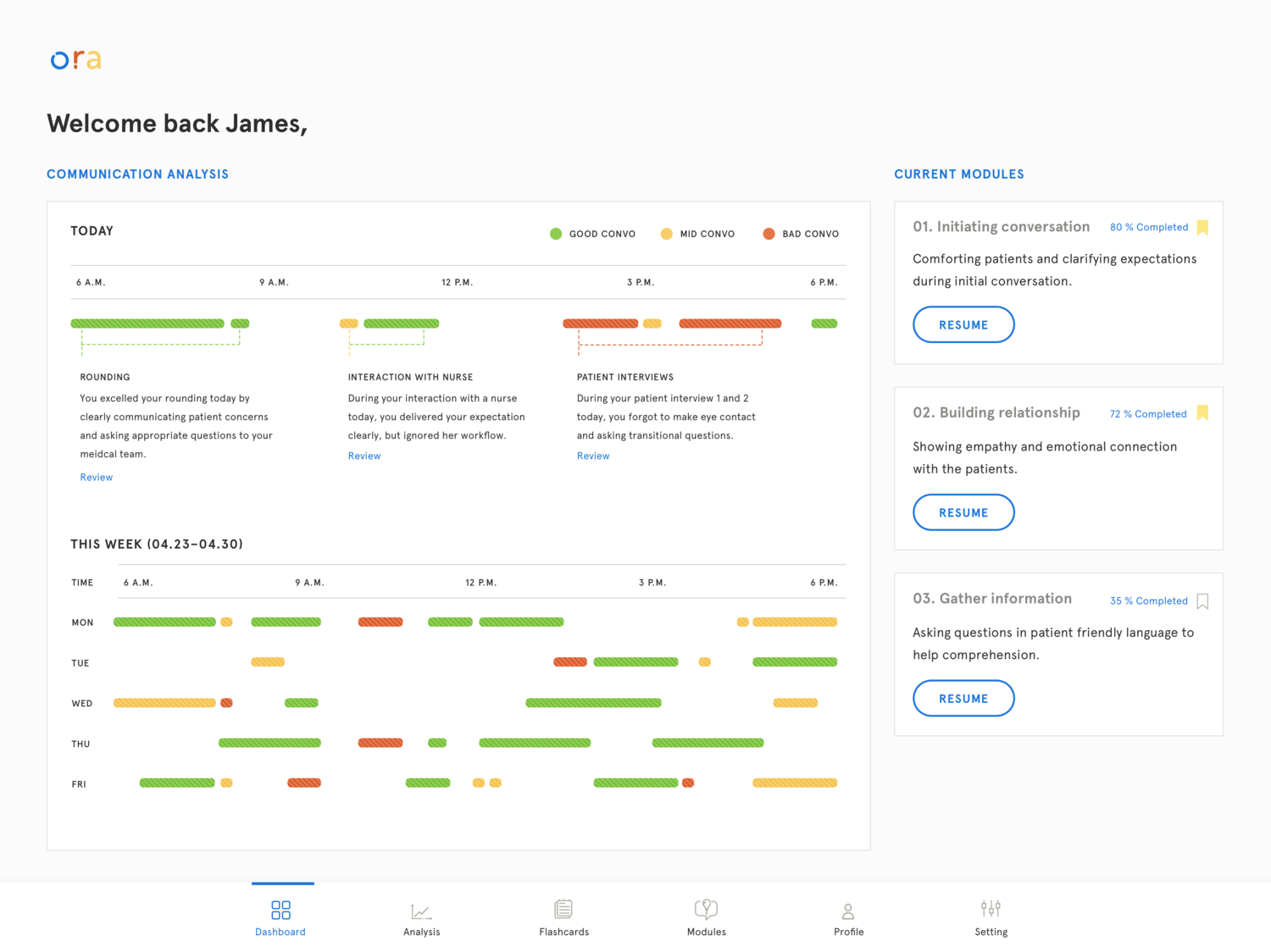

Evaluate: Growth

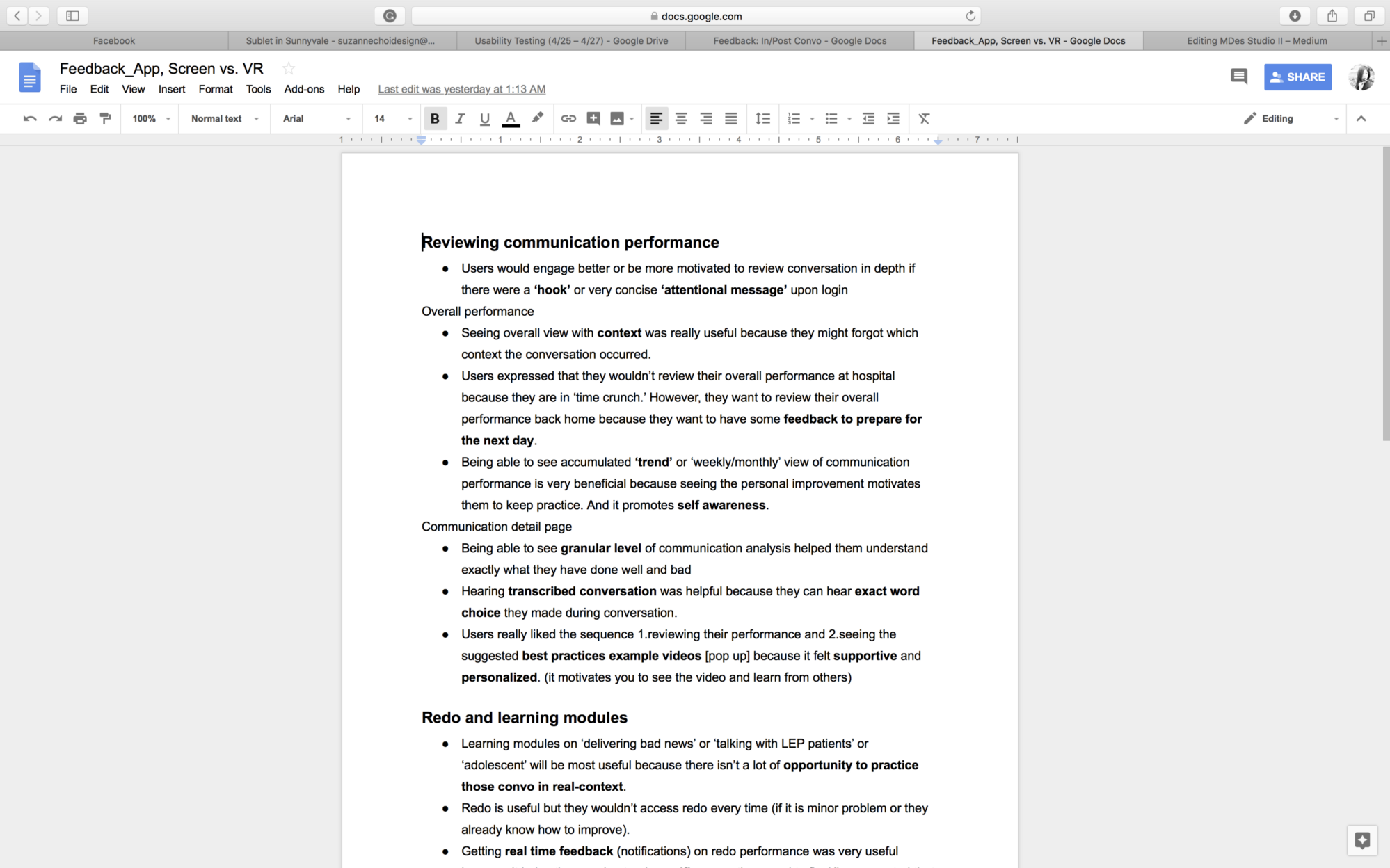

When the student turns on the tablet, they first see visualizations of their communication growth trajectory, which help them identify their communication strengths and weaknesses.

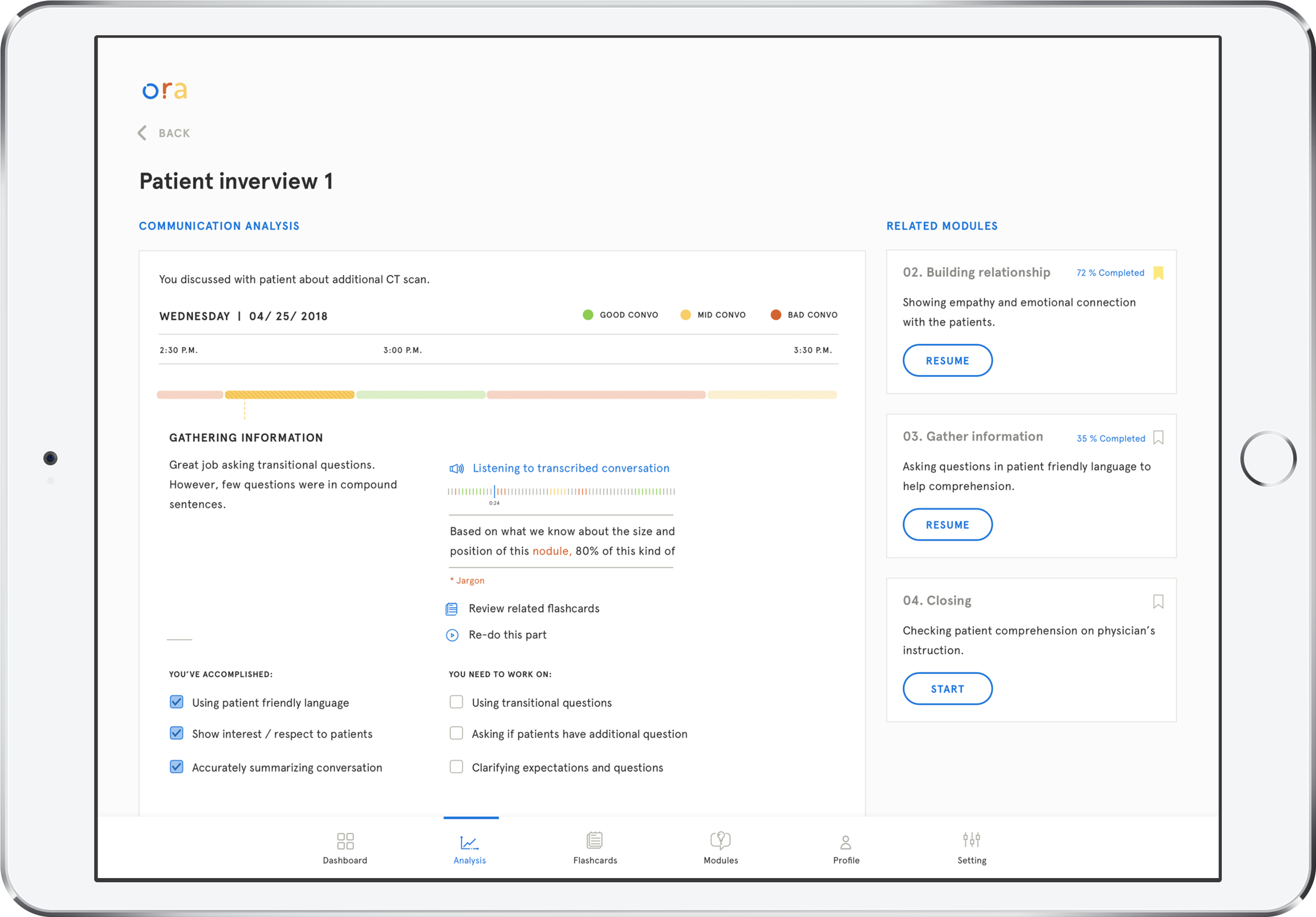

Evaluate: Conversation

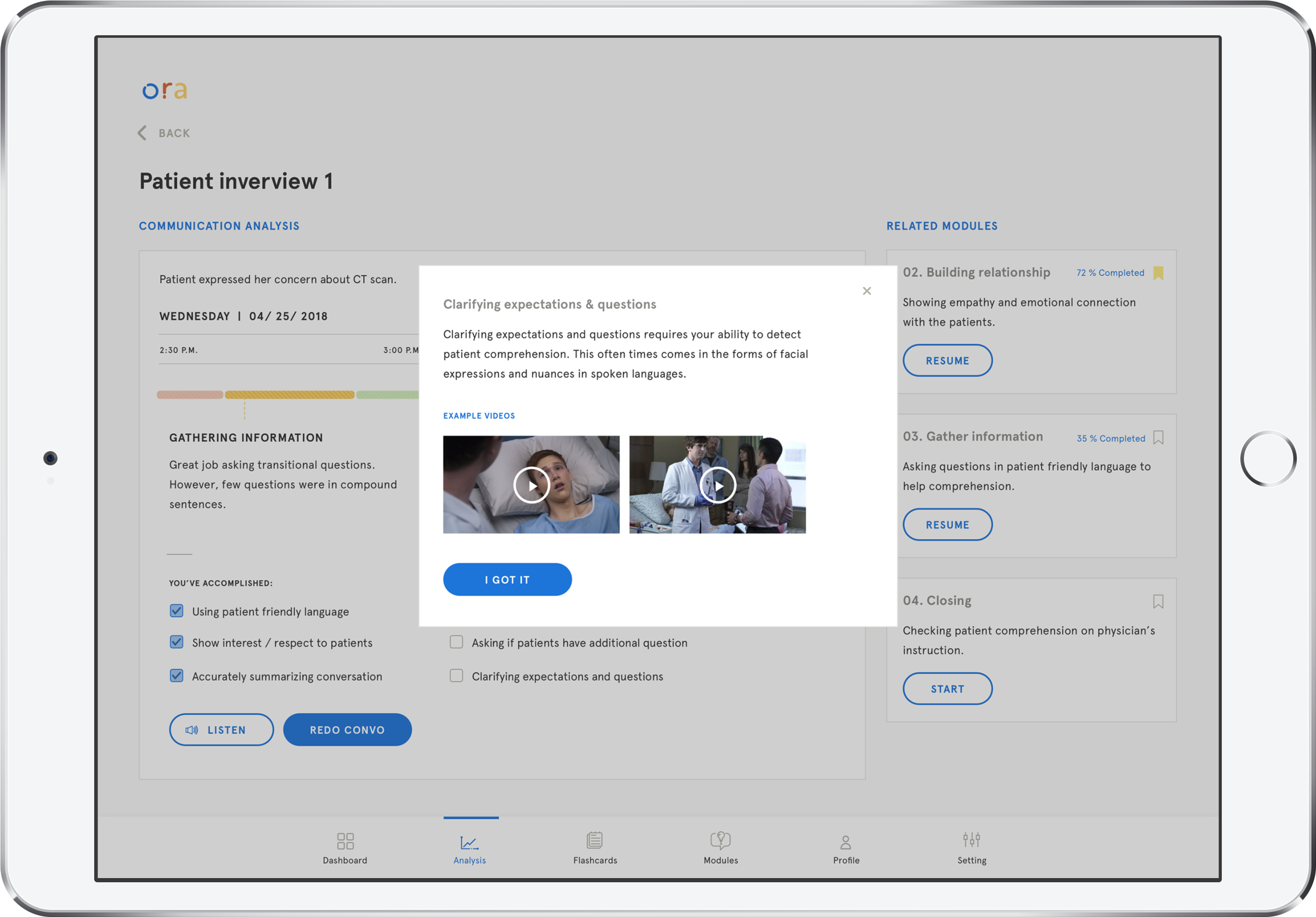

ORA provides a detailed, topic-by-topic analysis of each conversation segment, along with a transcript of the full conversation in which the patient’s information is anonymized.

Alongside this analysis, ORA recommends best practices and how-to videos to help the student understand how to improve.

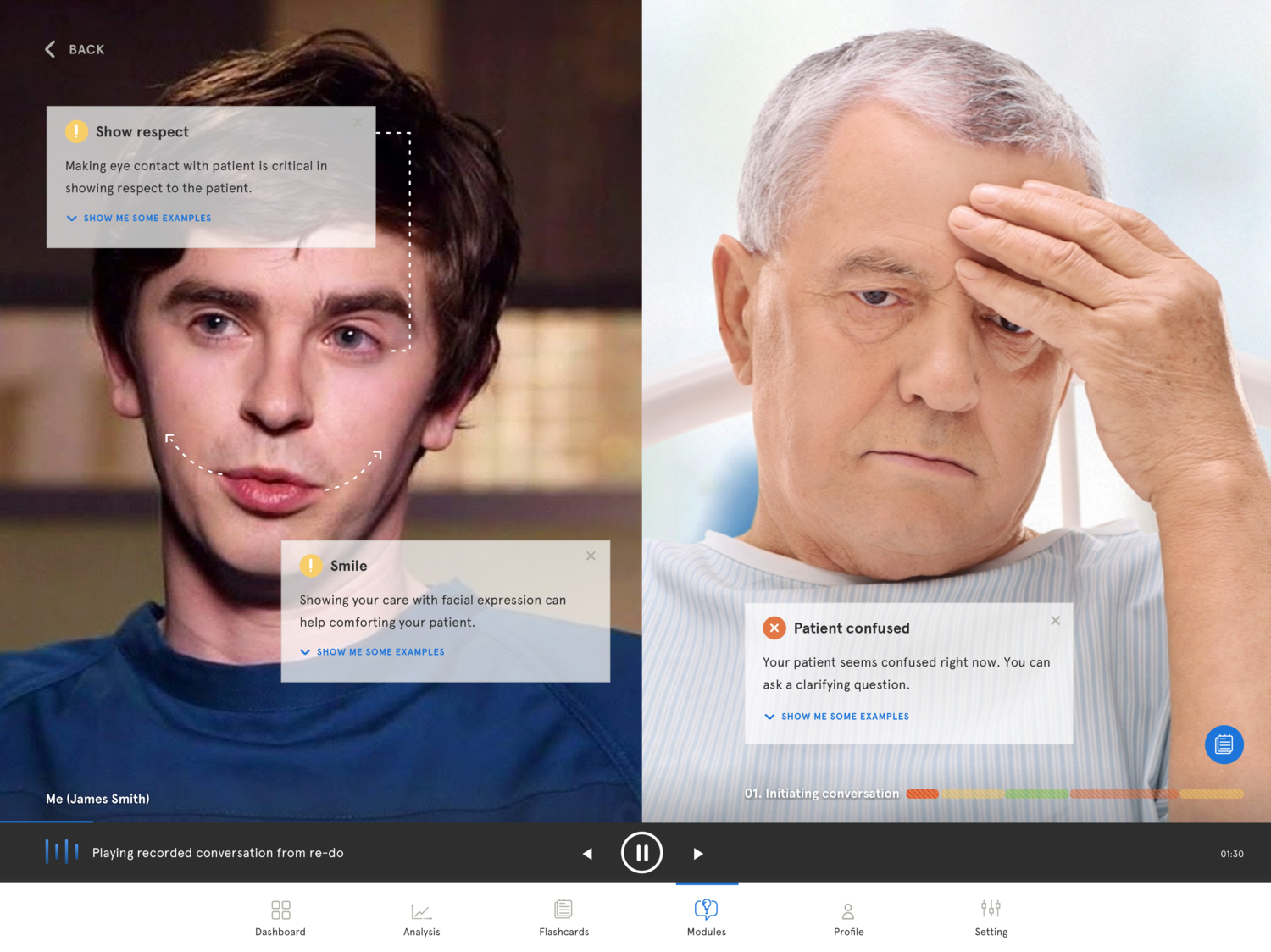

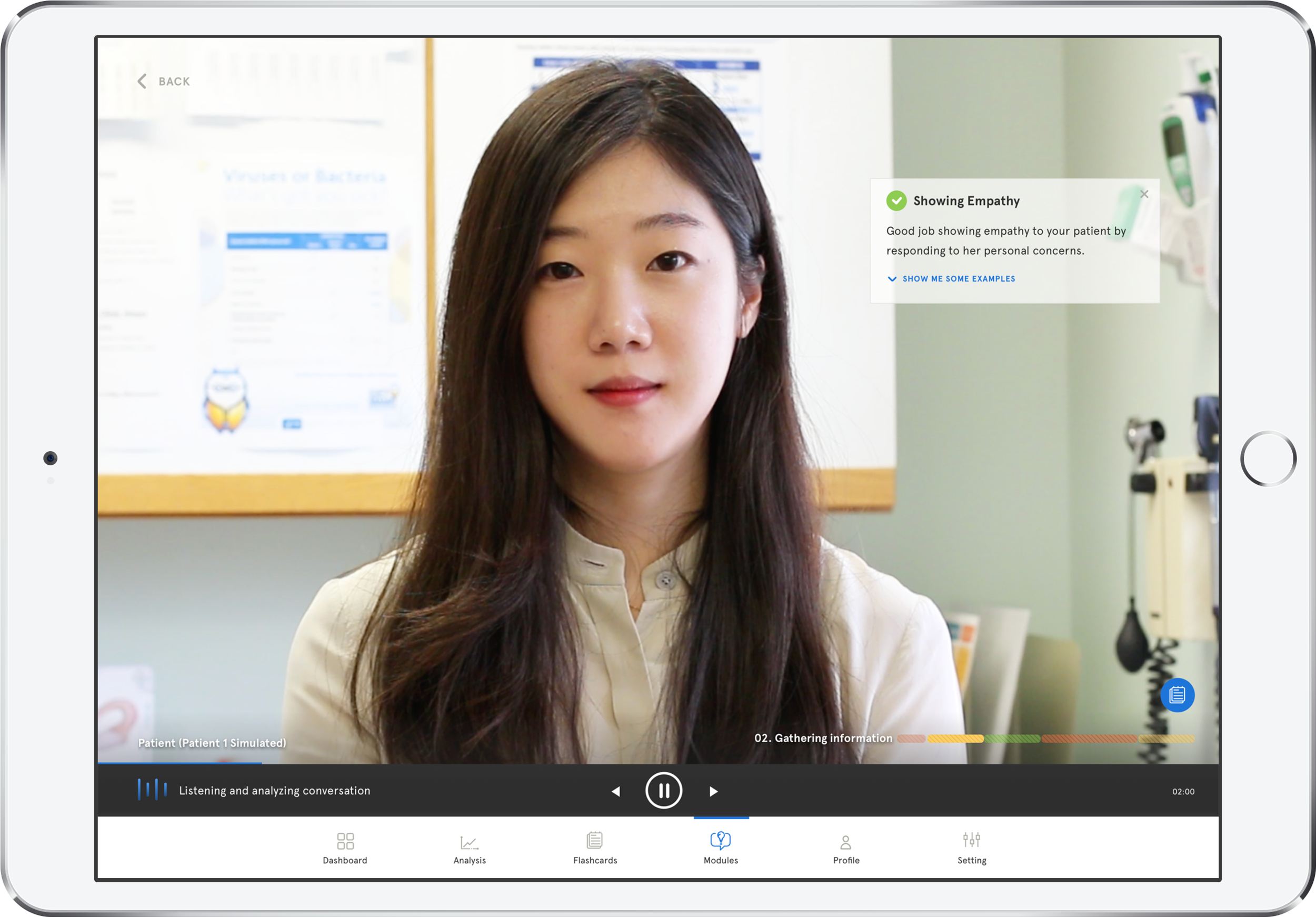

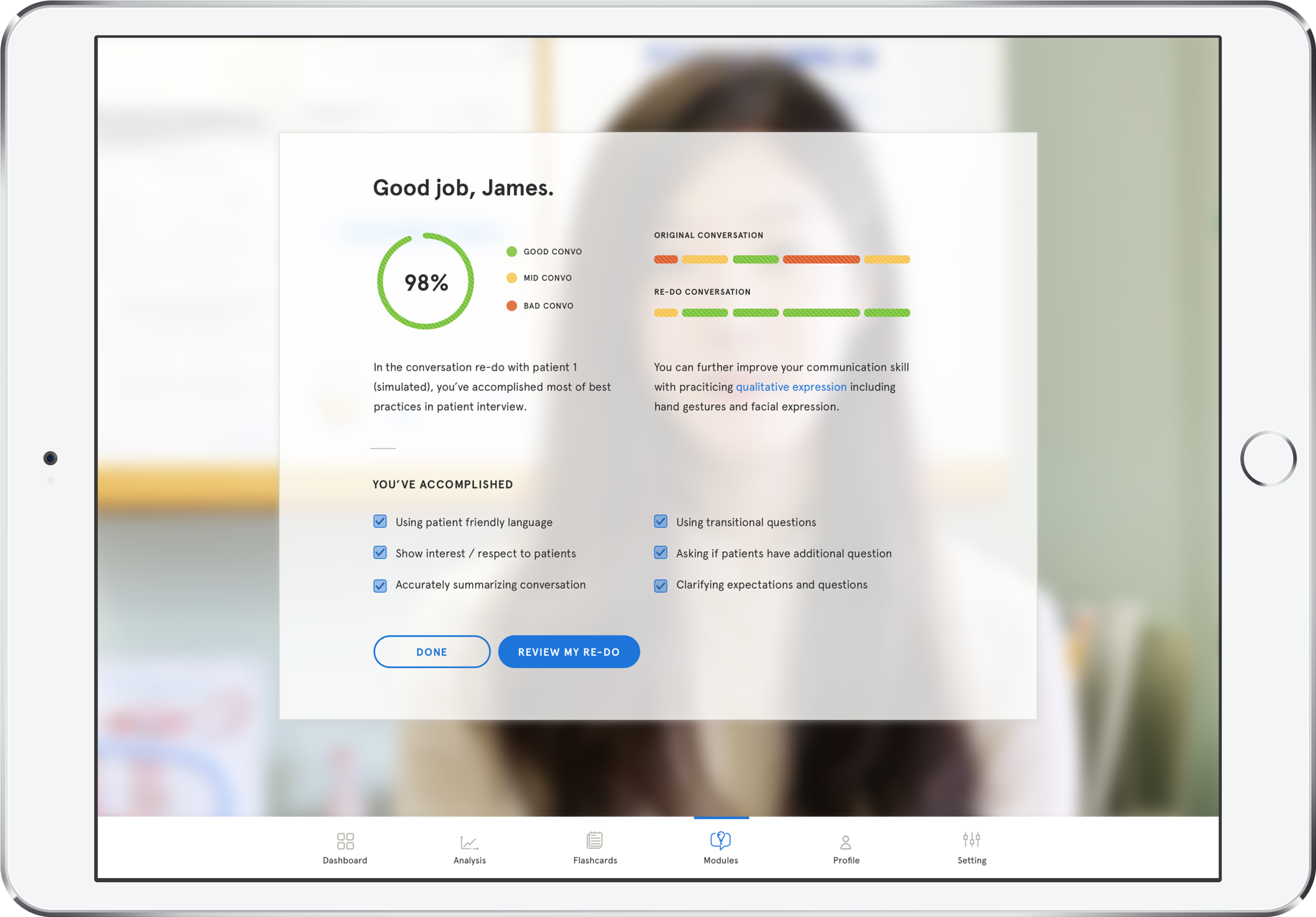

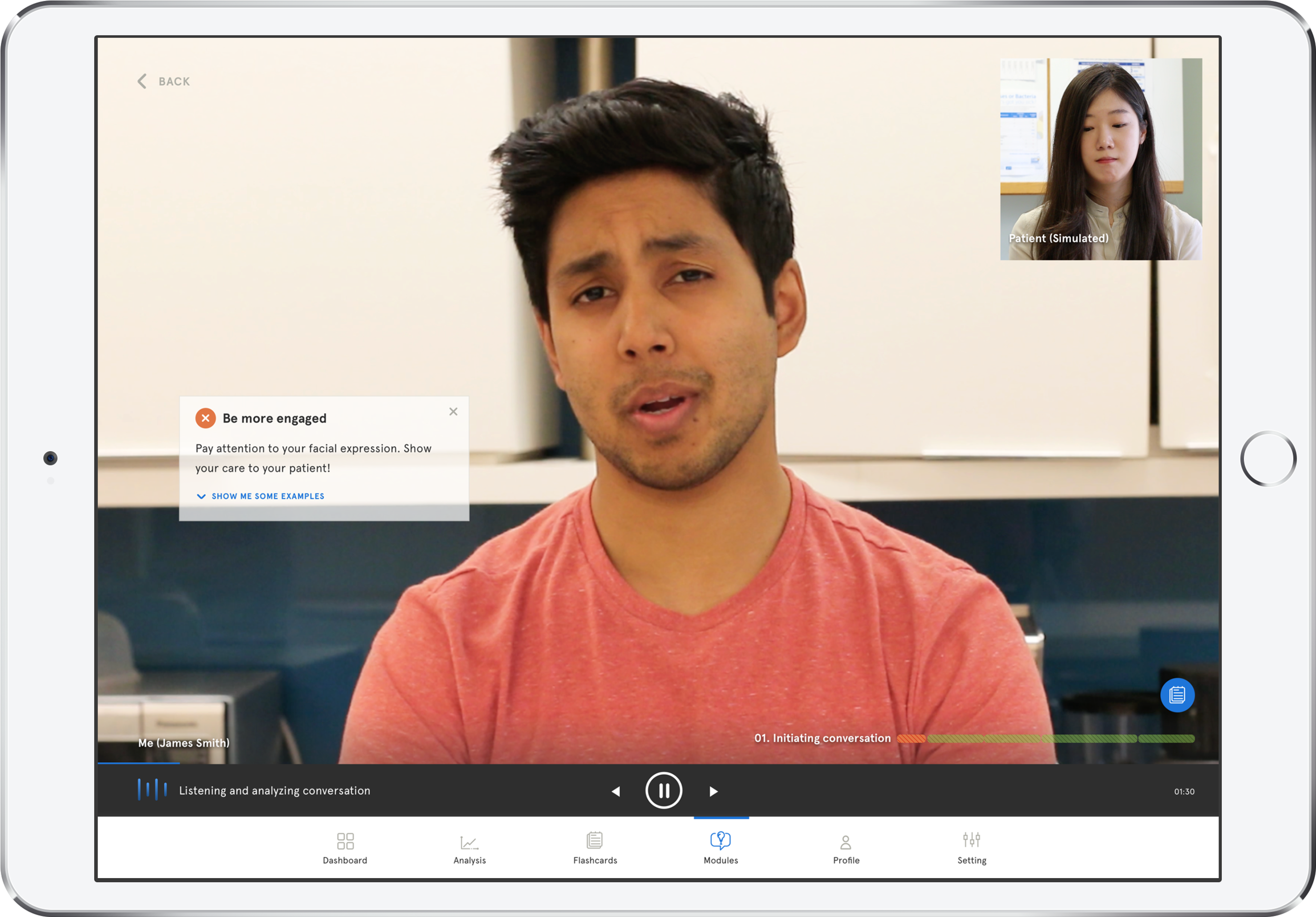

Practice: Virtual Agent

The student can redo any conversation segment and receive in-the-moment feedback on breakdowns and corrections. A patient avatar stands in for the real patient to remove personal identifiers and protect patient privacy.

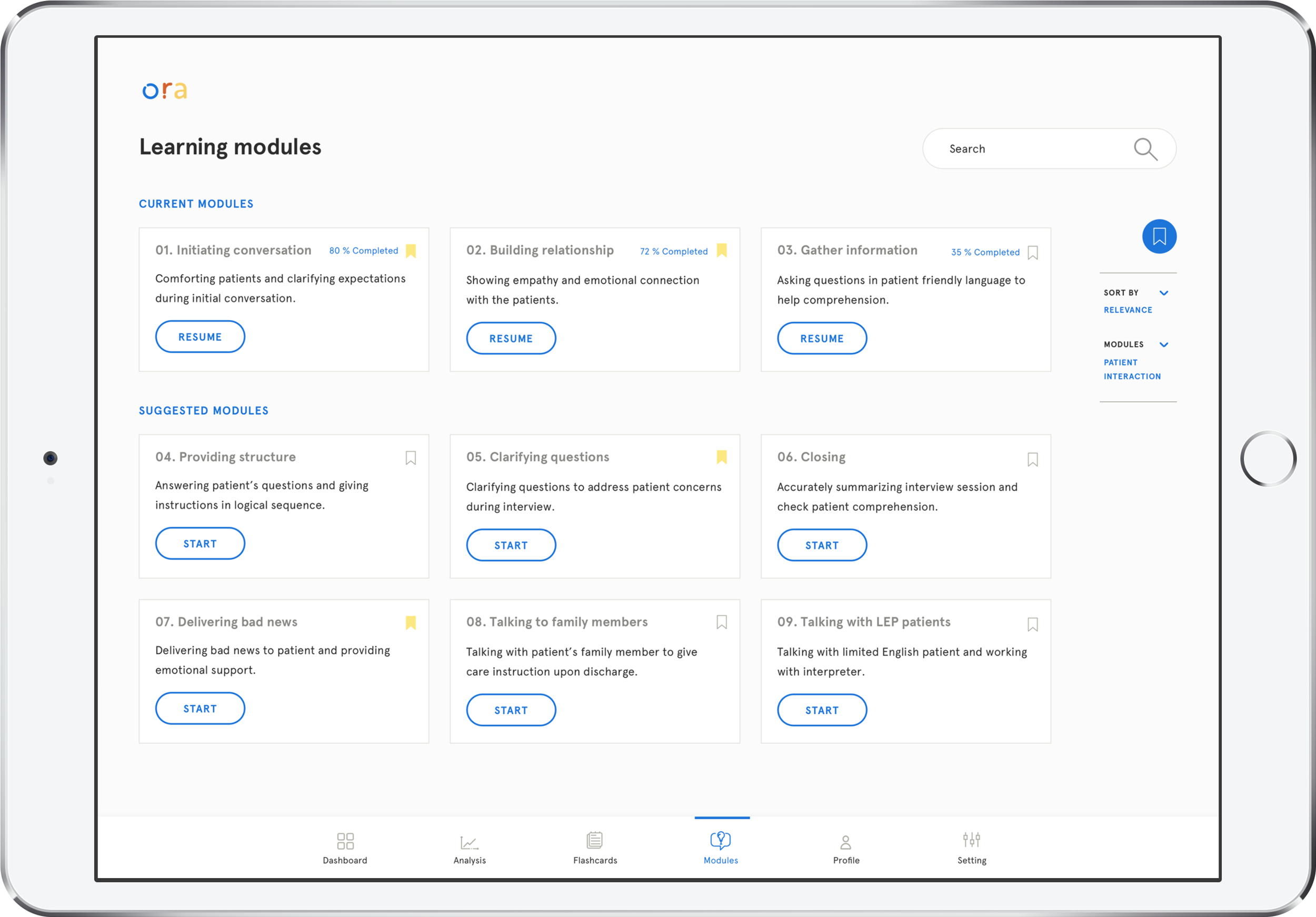

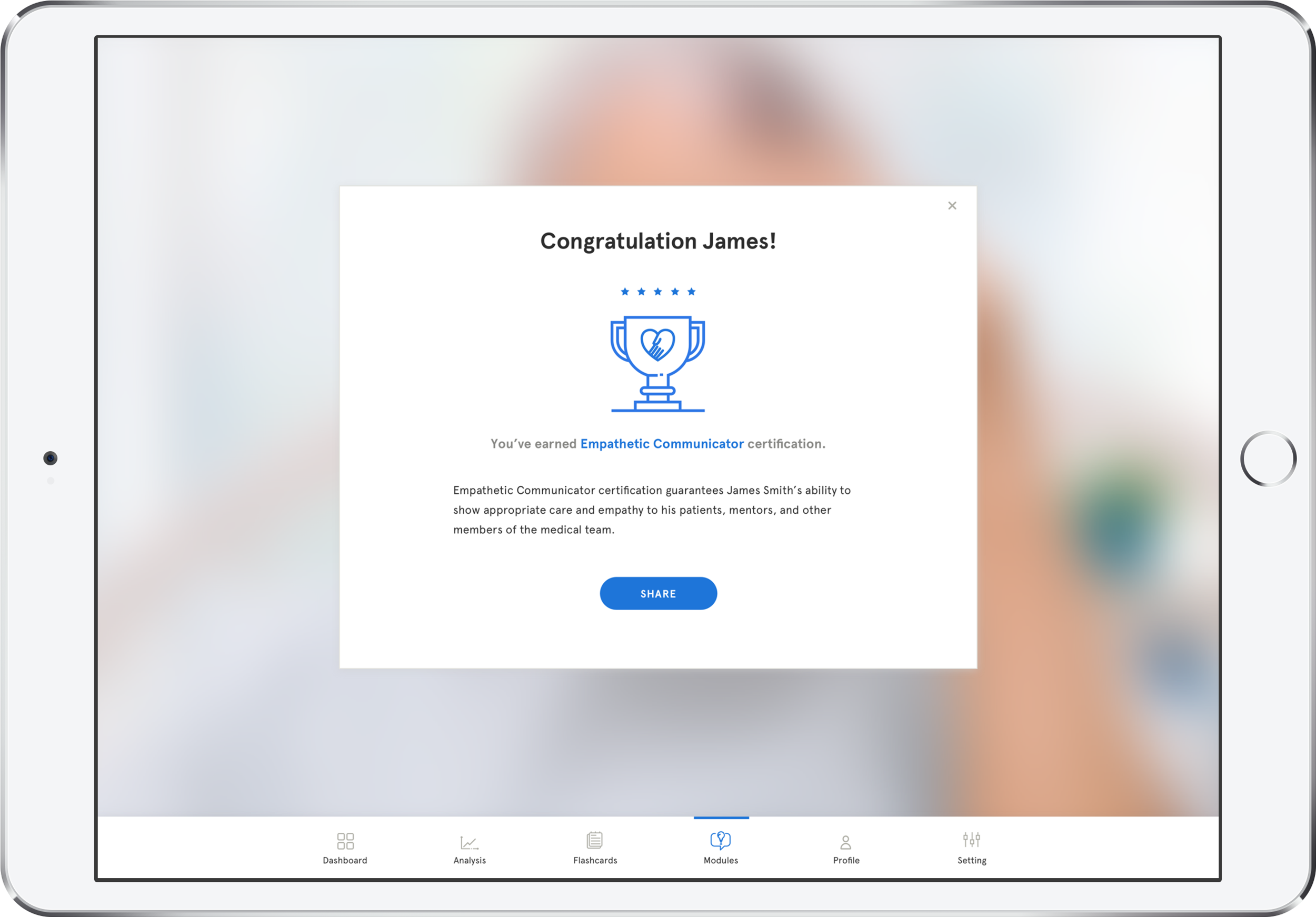

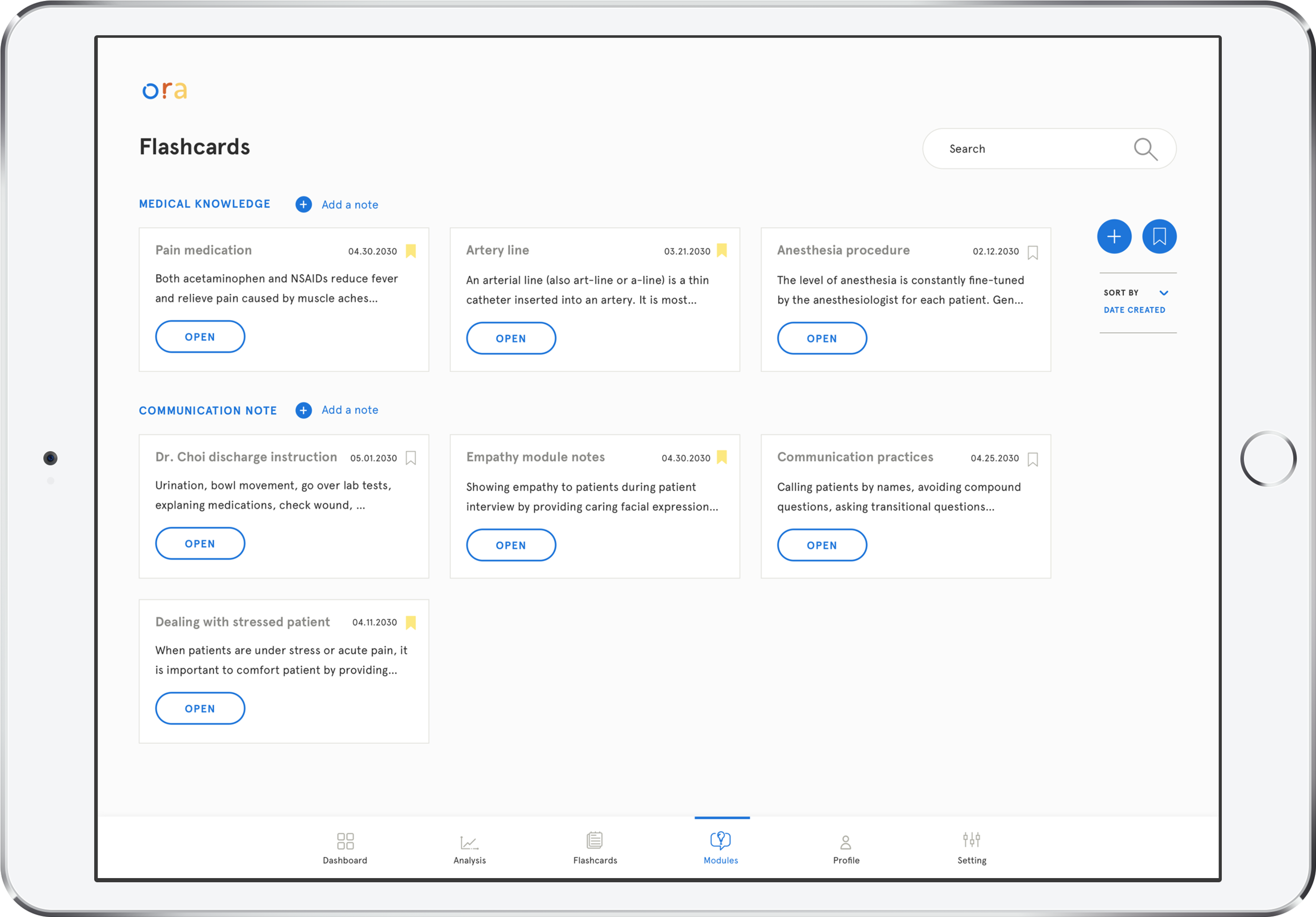

Prepare: Module

ORA leverages existing teaching resources and techniques to provide learning modules for different communication situations. After completing a series of modules, the student can earn certifications that showcase specific skills to future employers. The student can also save flashcards and notes within the ORA platform to support their learning.

How ORA learns to teach

ORA's AI is built on natural language processing (NLP) and further trained on existing communication scripts used in medical education.

As medical students use ORA, it learns from their real conversations and feedback to better identify communication breakdowns and suggest useful tips.

With expanded infrastructure, ORA could also incorporate video of conversations and patient feedback.

How I got here

For the detailed design process, please see our final presentation.

DESIGN PROCESS

01

SCOPING FRAMEWORK

Territory Map

We started this project by creating a territory map of healthcare to identify opportunity areas. We placed patients at the center of the diagram because they interact frequently with the other stakeholders.

As our research progressed, we further focused on medical students and their communication learning.

02

Early Exploratory Research

Interview & Survey

We conducted 16 interviews and collected 11 online survey responses. Interviewees included medical students, doctors, residents, designers in the medical industry, technical specialists, and computer science professors.

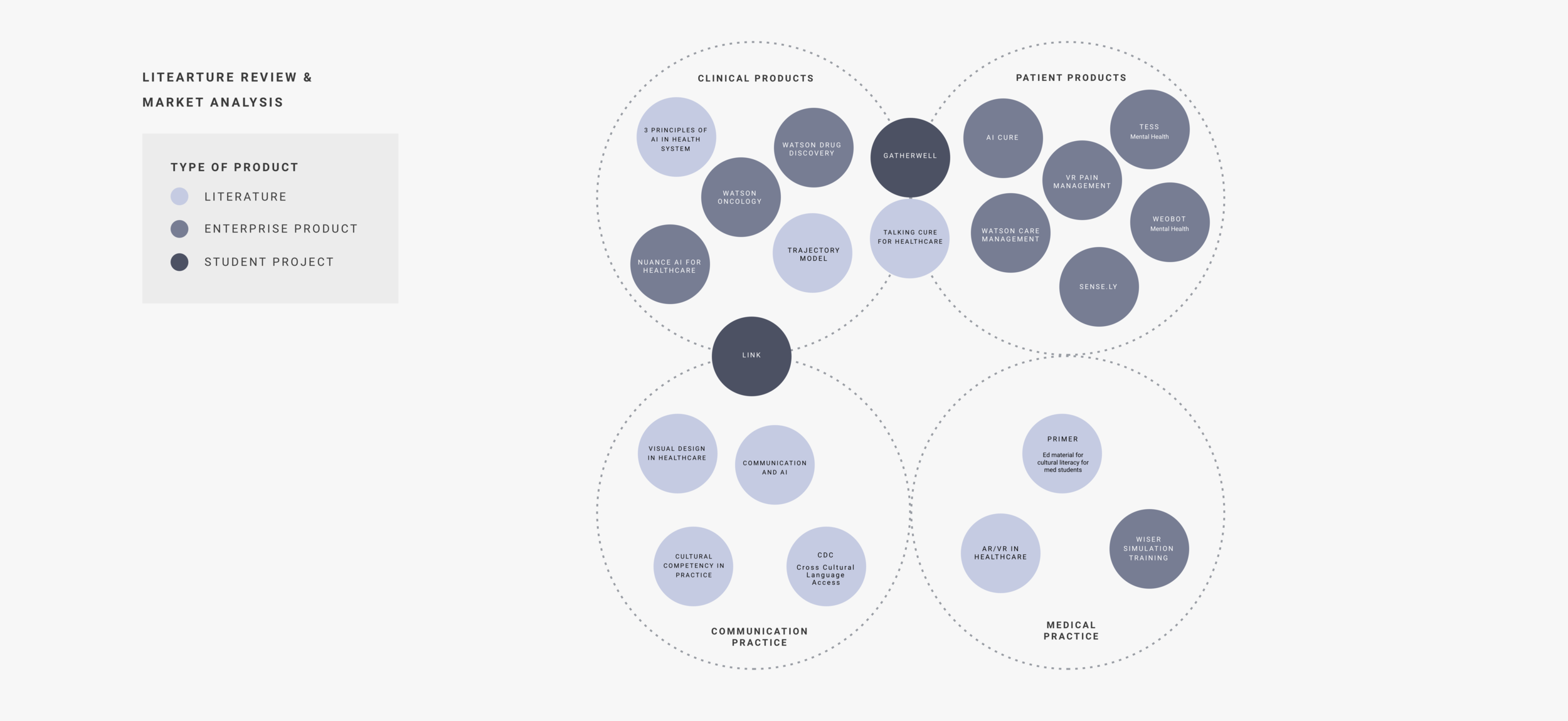

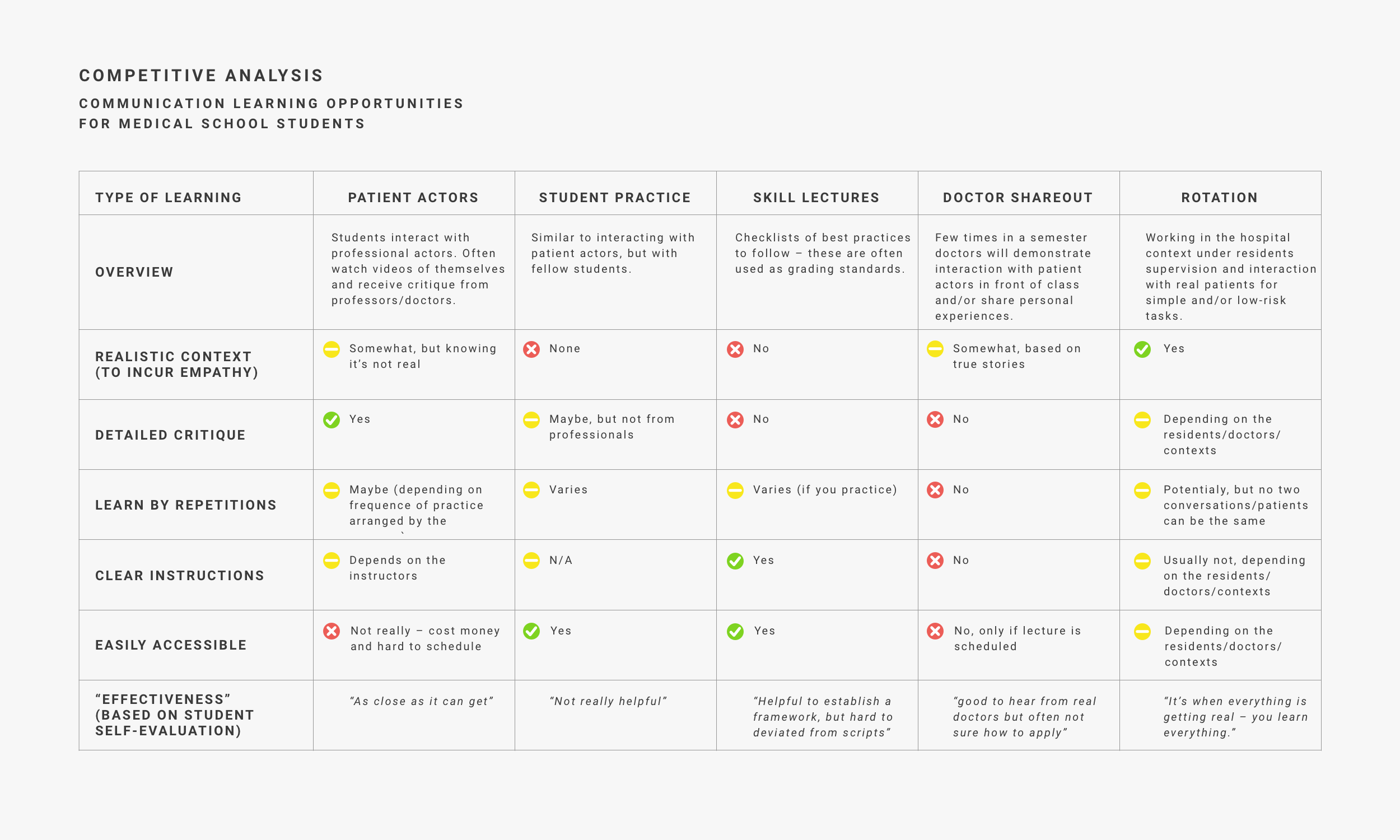

Literature Review & Market Analysis

We also conducted in-depth secondary research to understand recent design and technology trends in healthcare. We identified five insights and included them in the synthesis.

Insights from current available solutions

1. Doctors or patients

Existing interventions are often designed for either doctors or patients, rarely both.

2. Tech generation gap

There is a technology generation gap between experienced doctors and new doctors.

3. AR/VR potential

In medical education, AR/VR technology is currently used largely for surgical training. There is tremendous potential in using AR/VR to facilitate communication learning.

4. Personalization

Products that enable personalization, such as tailoring information to specific individual needs or providing translation for limited English proficiency (LEP) patients, are well received.

5. No platform for caregivers

There is no formal learning platform designed for caregivers.

Research Synthesis

The synthesis was a rigorous three-step process: we started with a wall of "insights" extracted from the exploratory research, clustered them through affinity mapping, and then derived design principles from each cluster.

After the synthesis, we were able to further narrow our project objective and refine our design principles.

03

Focused Exploratory & Early Generative Stage

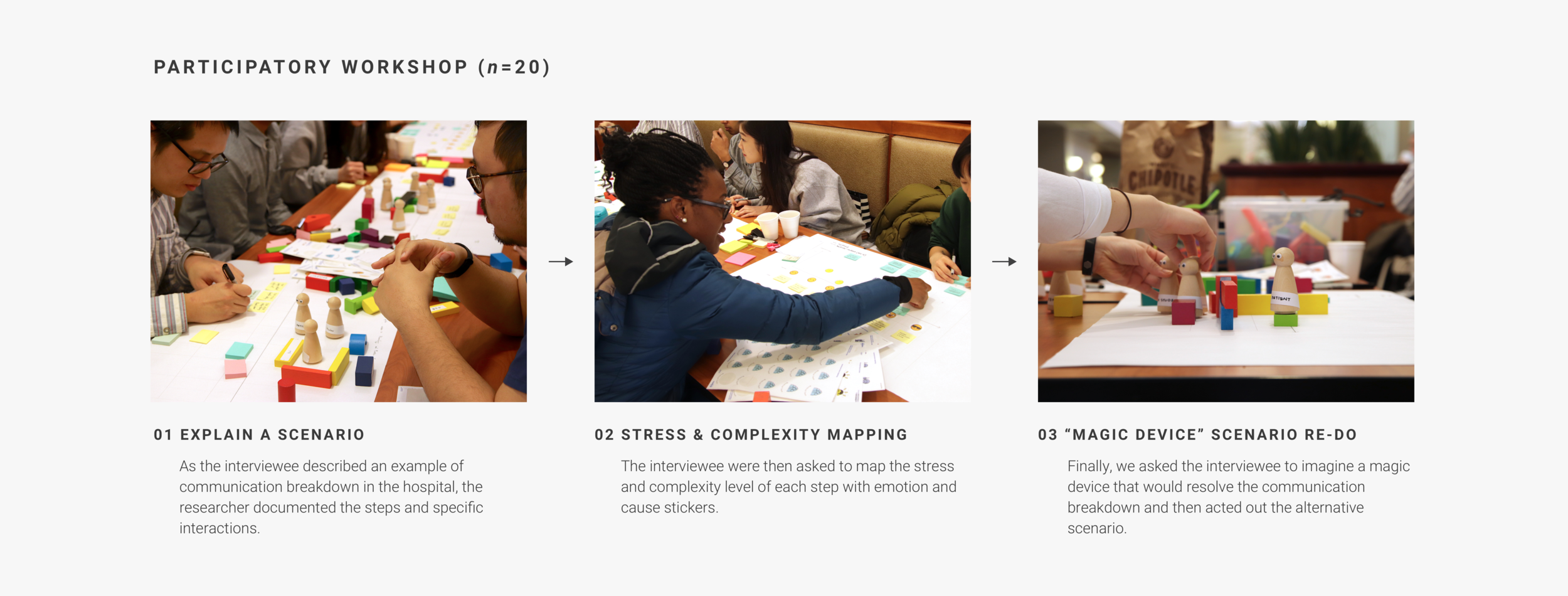

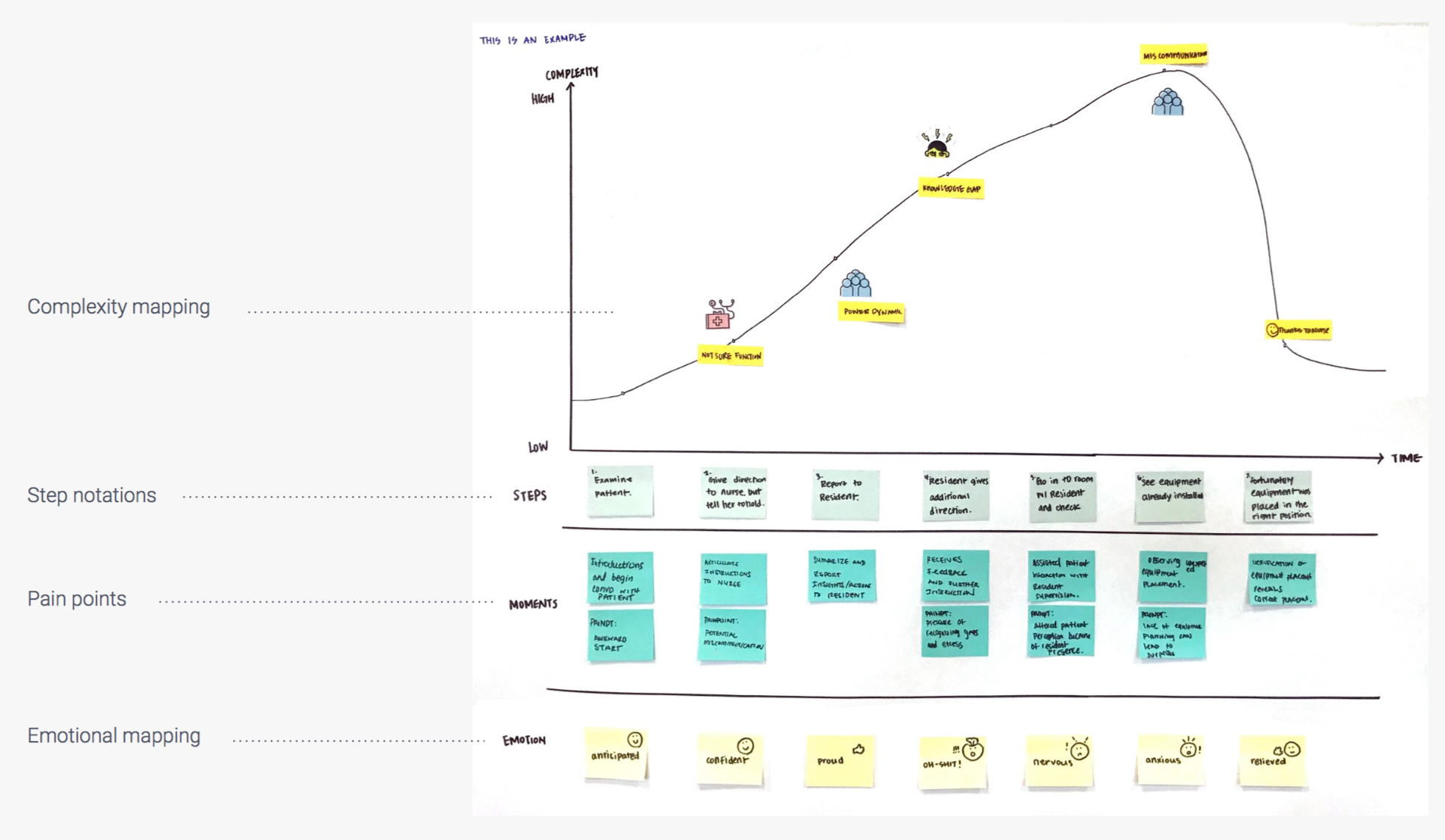

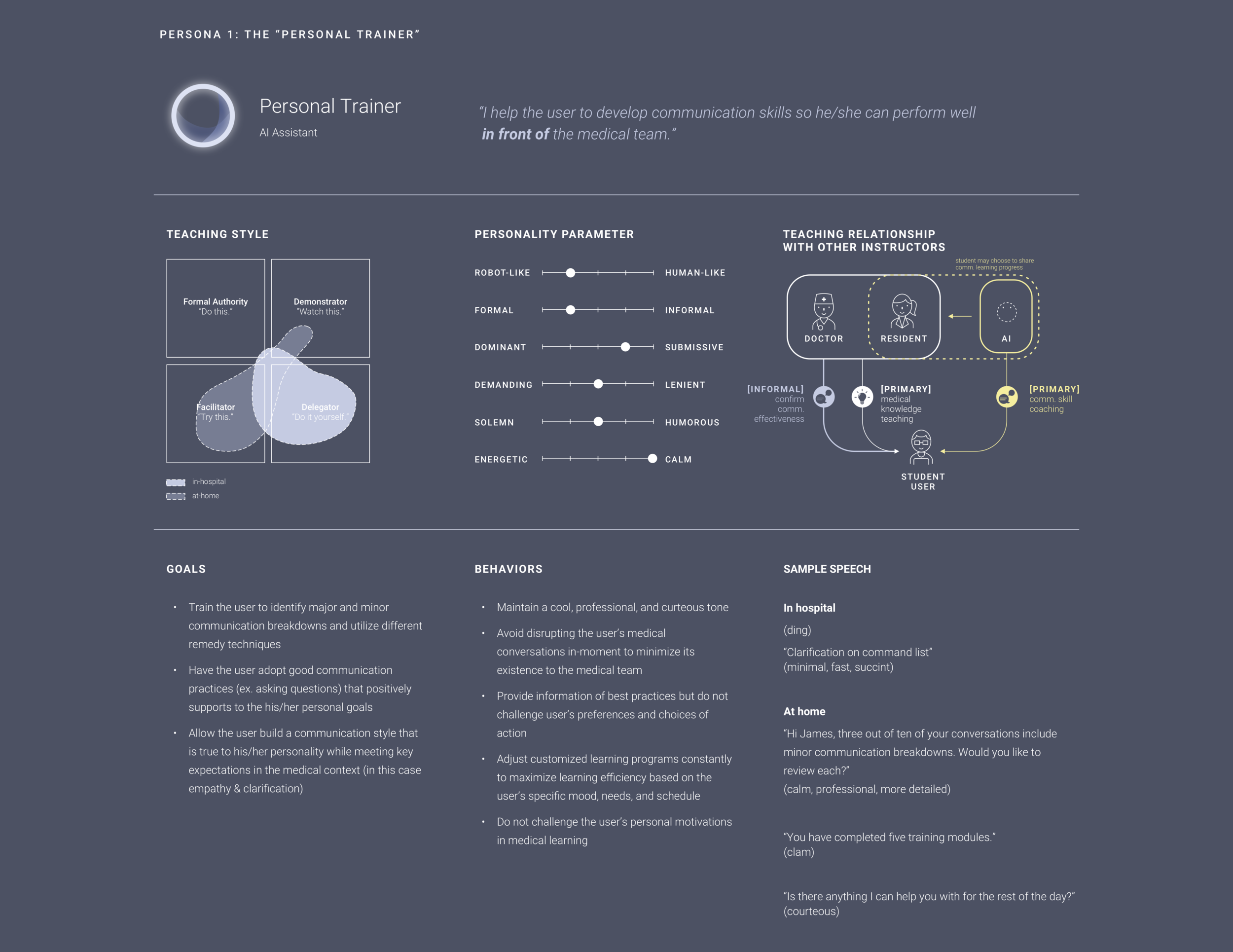

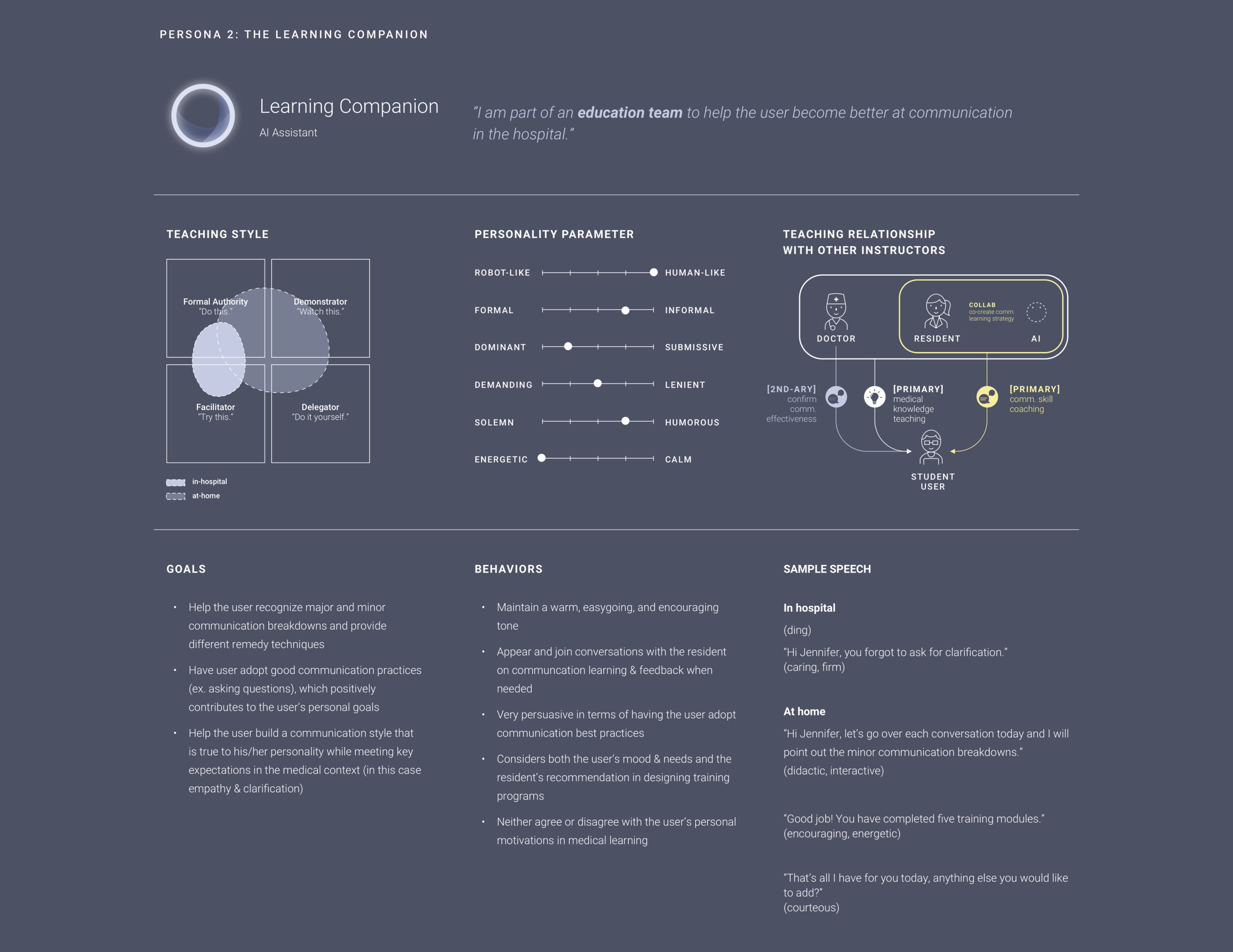

In this stage, we conducted a participatory workshop and a text-based diary study to understand medical students' communication learning and breakdowns in more detail. From the results, we built two medical student personas and two AI personas.

Participatory Workshop

We ran a participatory workshop at UPMC (University of Pittsburgh Medical Center) to understand where communication breakdowns happen in daily hospital activities. The workshop had three stages:

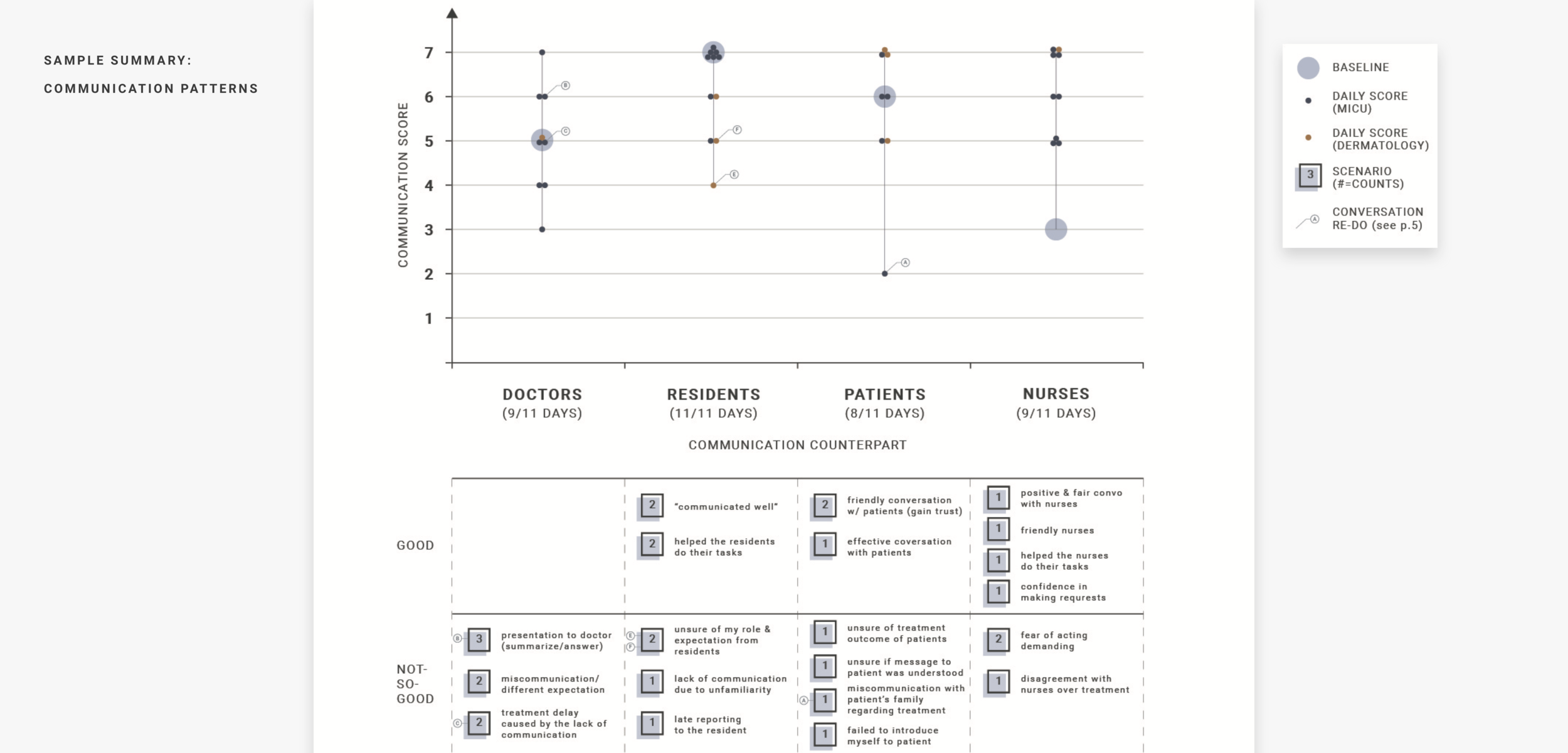

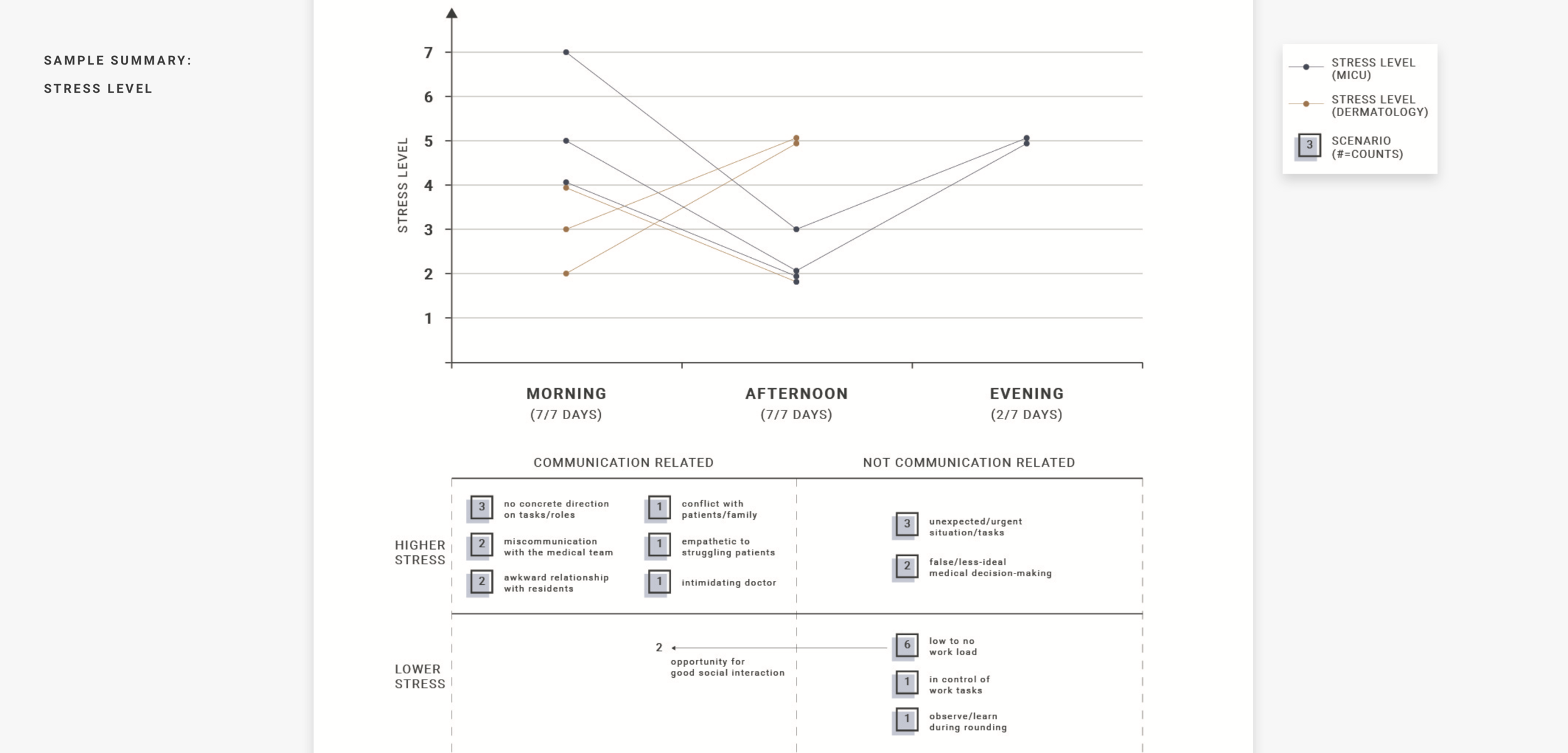

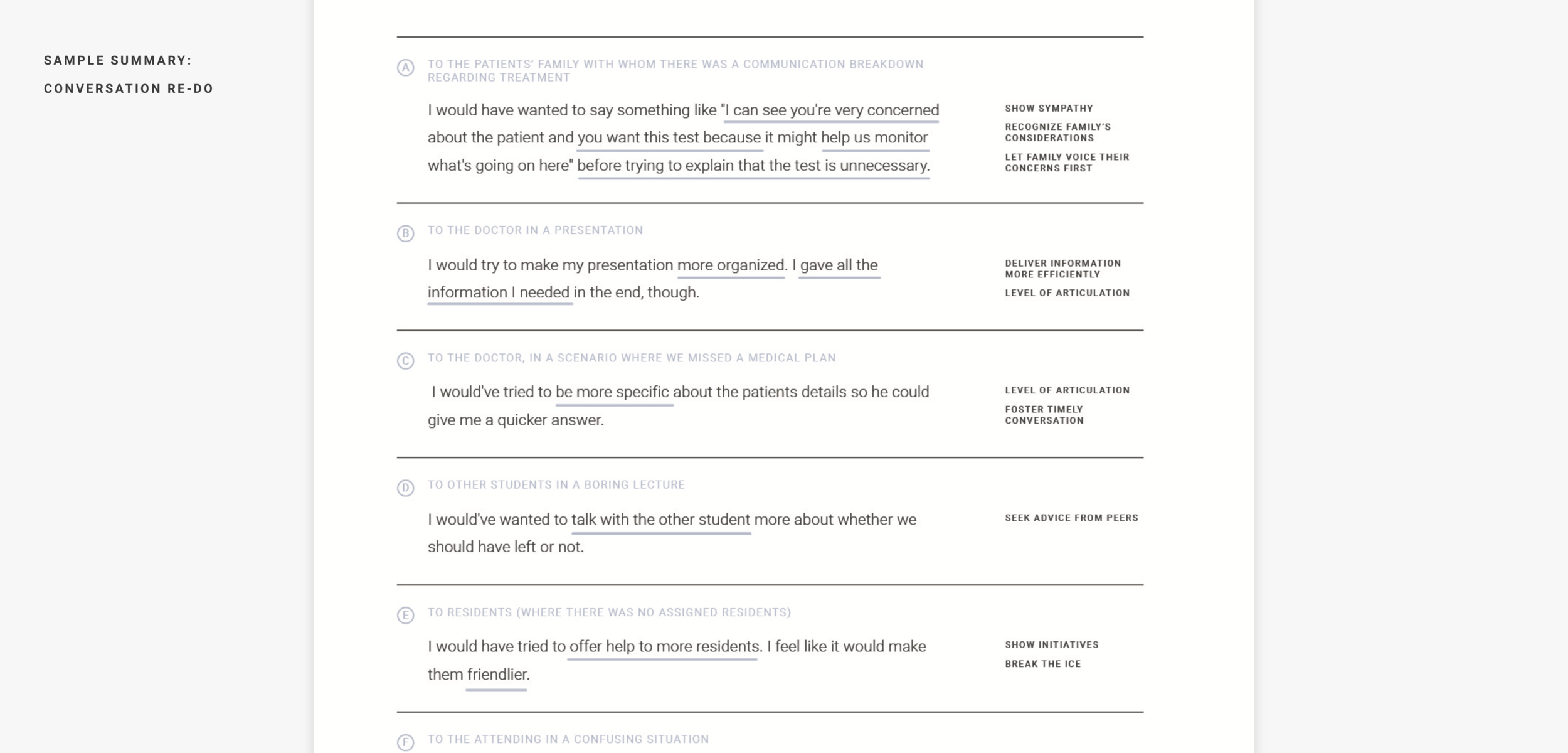

Text-based Diary Study

Because students on rotation are busy, we designed a short, daily text-based study to understand their interactions with other people in the hospital and their stress levels throughout the day.

What we learned

These two studies helped us dive into the details of rotation students' daily lives. Some of the insights were:

1. Confirmation:

Students desire clear confirmation of their communication behaviors and corrections.

2. Tips for improvement:

Students struggle to identify concrete elements to improve from failed experiences.

3. Practice for rare patient situations:

Practicing rare patient interactions, such as those with patients with limited English proficiency (LEP) or with adolescents, is particularly useful because such experiences are difficult to gain in real life.

Persona Development

Based on the information gathered from exploratory research, we created two student personas and two corresponding AI personas. As we constructed each AI persona, we designed sample speech to reflect the learning needs of a particular student. The persona study also led us to look further into the needs and challenges of including existing teachers (doctors and residents) in the communication learning framework.

04

Focused Generative Stage

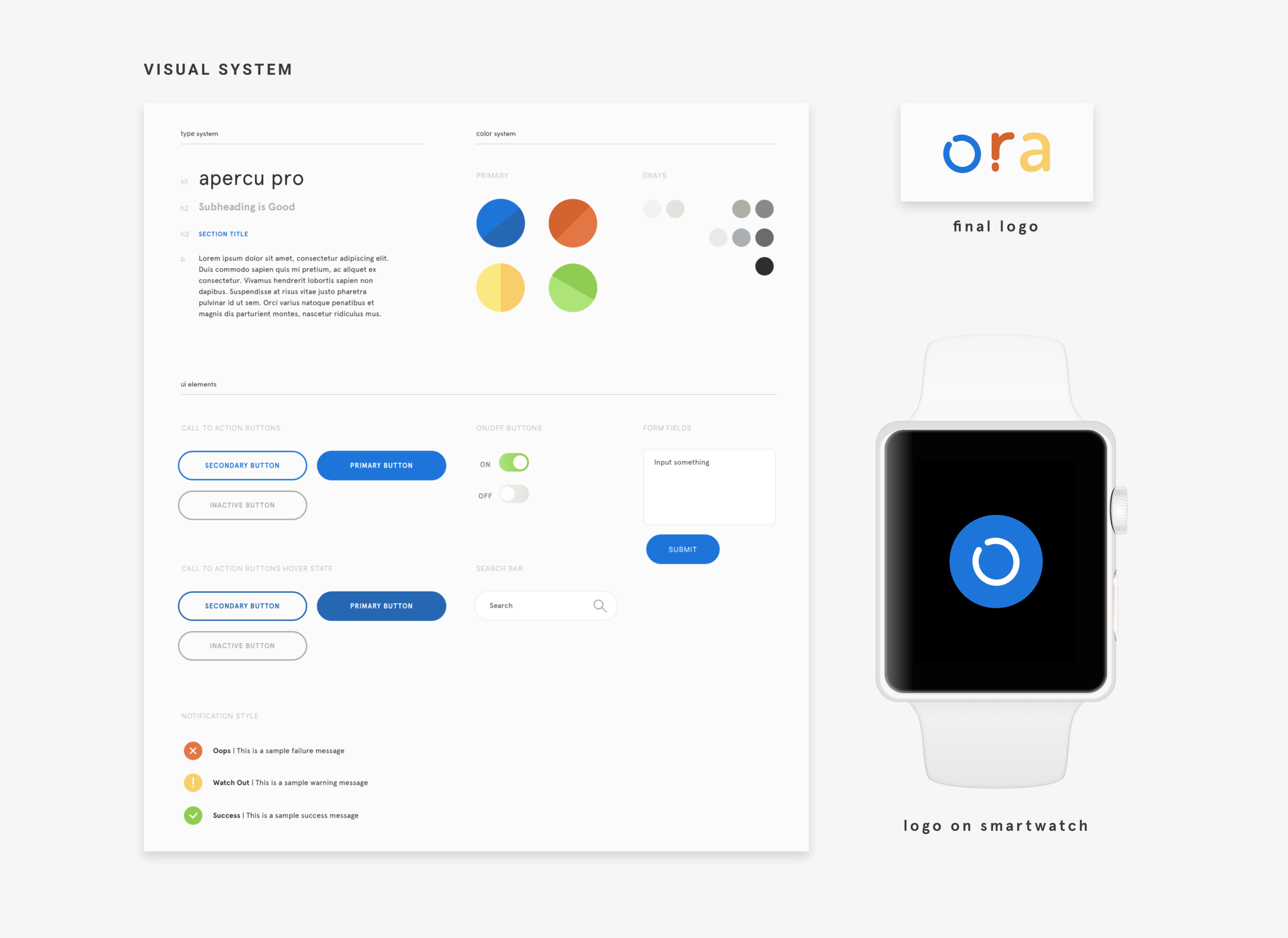

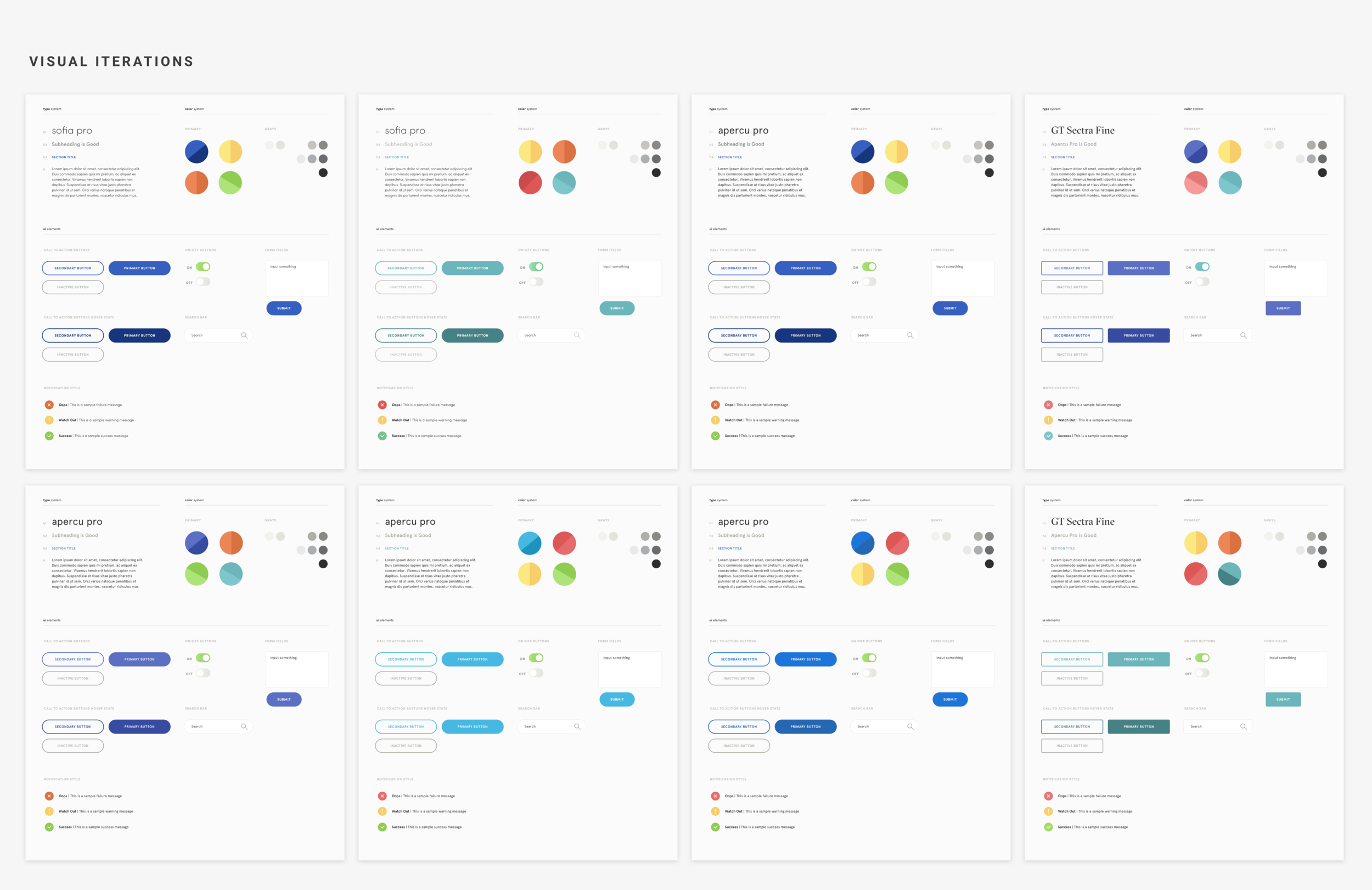

In this stage, we generated many ideas and conducted concept speed dating with storyboards and video. We iterated on our prototype based on user feedback and also worked on branding and visual design.

Storyboard & Speed Dating

Based on pain points and creative ideas that emerged from the generative research, we went through three rounds of design iteration involving six paper storyboards and one "video storyboard."

The video storyboard was the most useful in helping medical students envision and critique the concept during speed dating; with it, their feedback became much more concrete and actionable.

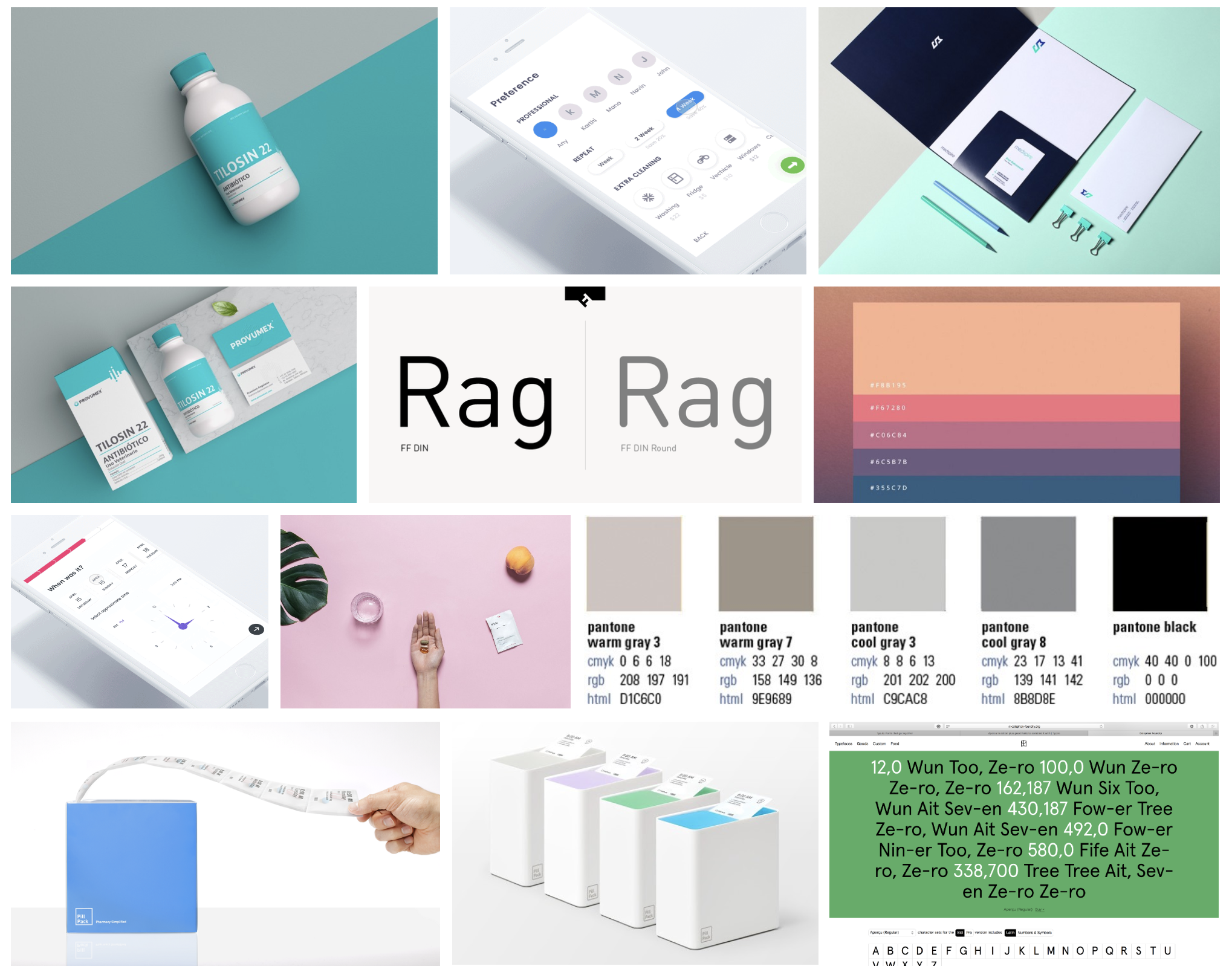

Visual Design & Branding

Before making mockups, we gathered examples of existing healthcare products that matched the AI personality we had defined during persona development. The AI persona guided our search for suitable inspirational examples, color schemes, motion behaviors, and typefaces.

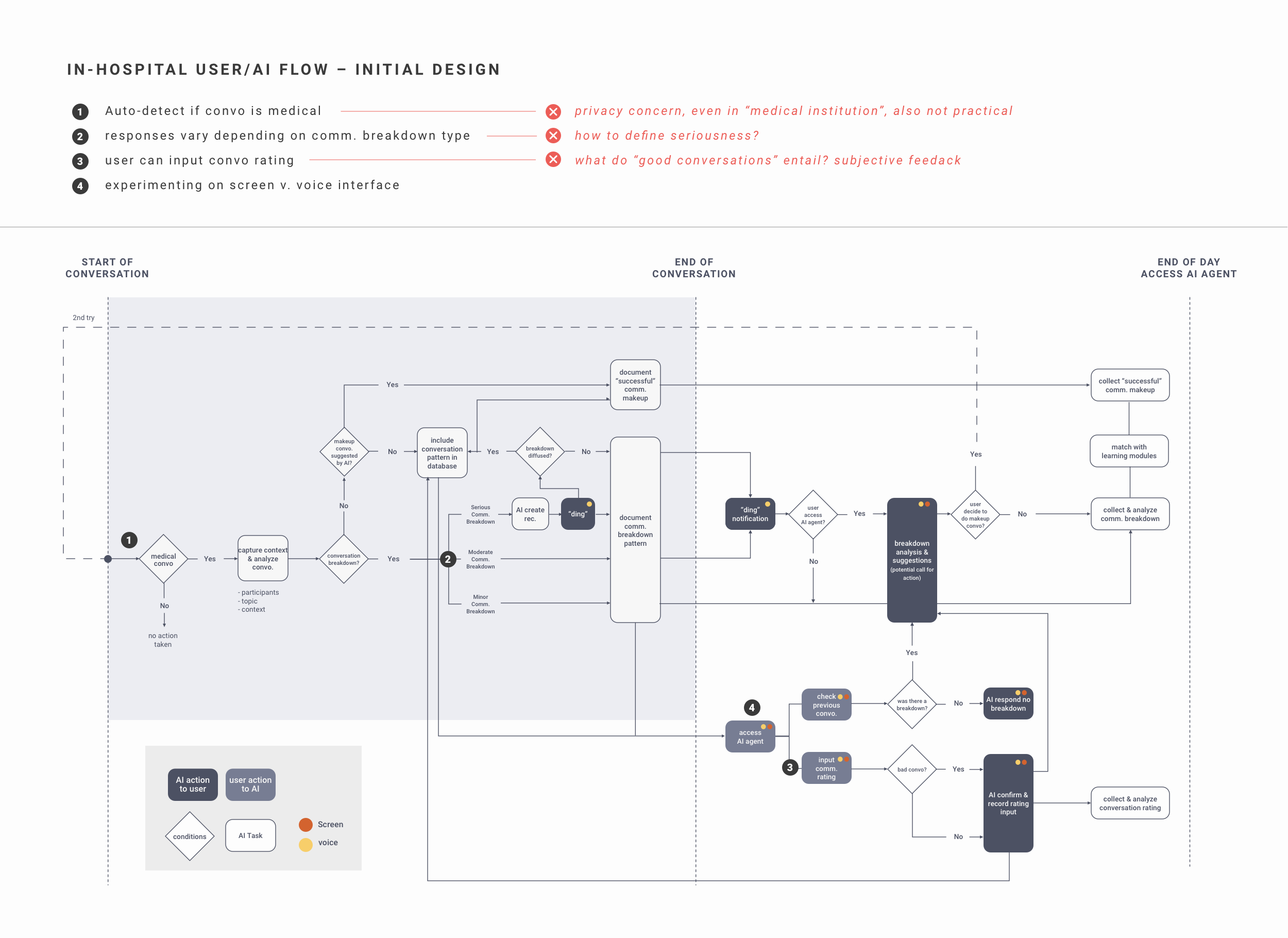

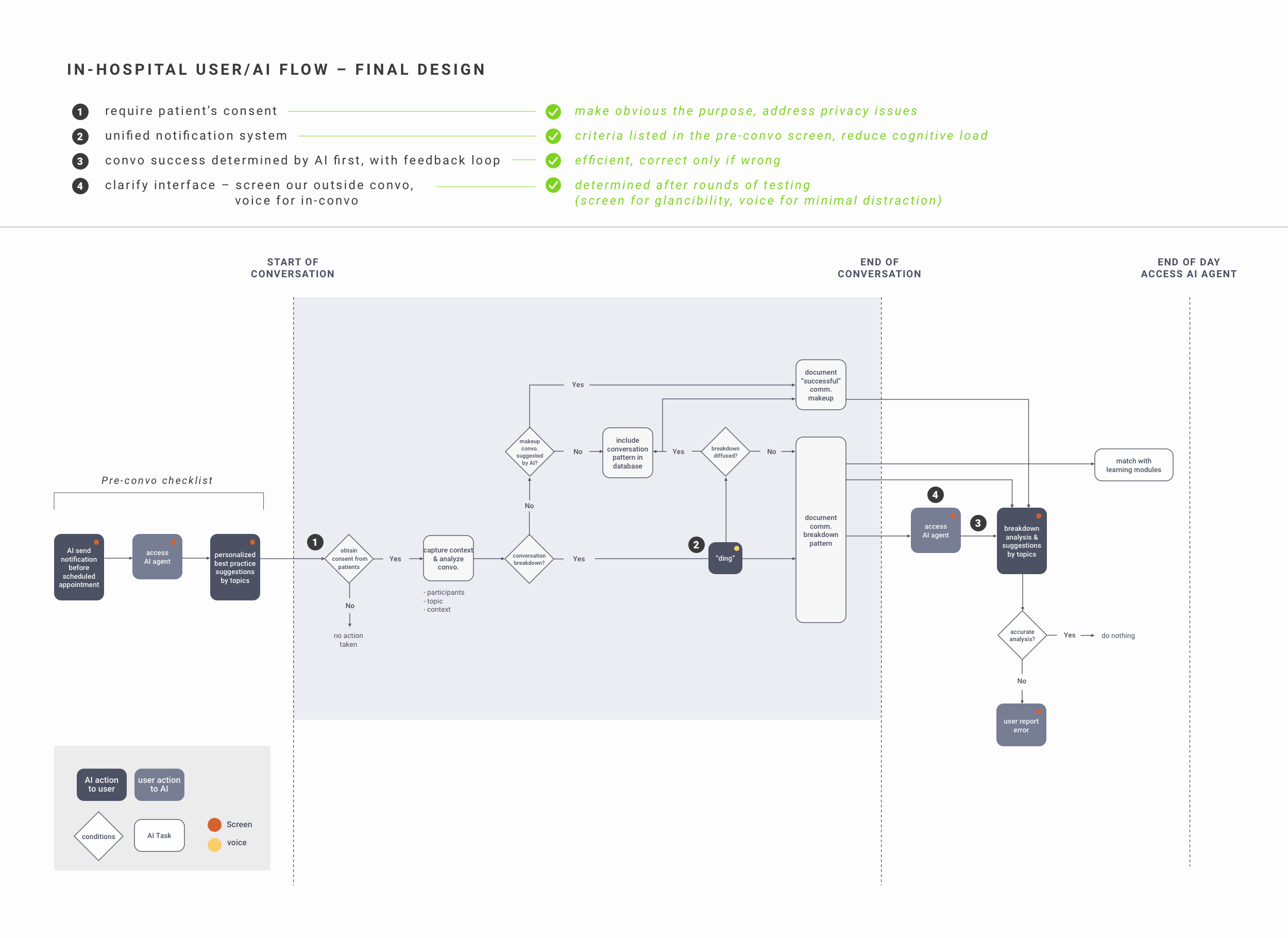

Flow mapping and prototyping

Based on the feedback we received from speed dating, we iterated on our concept with flow maps. We also began prototyping the conversation flow for voice notifications, the smartwatch screens, the at-home training app flow, and the VR simulations. Because many interviewees raised distraction and privacy concerns about AR glasses, we decided to deliver notifications and checklists through the smartwatch and earpiece instead.

05

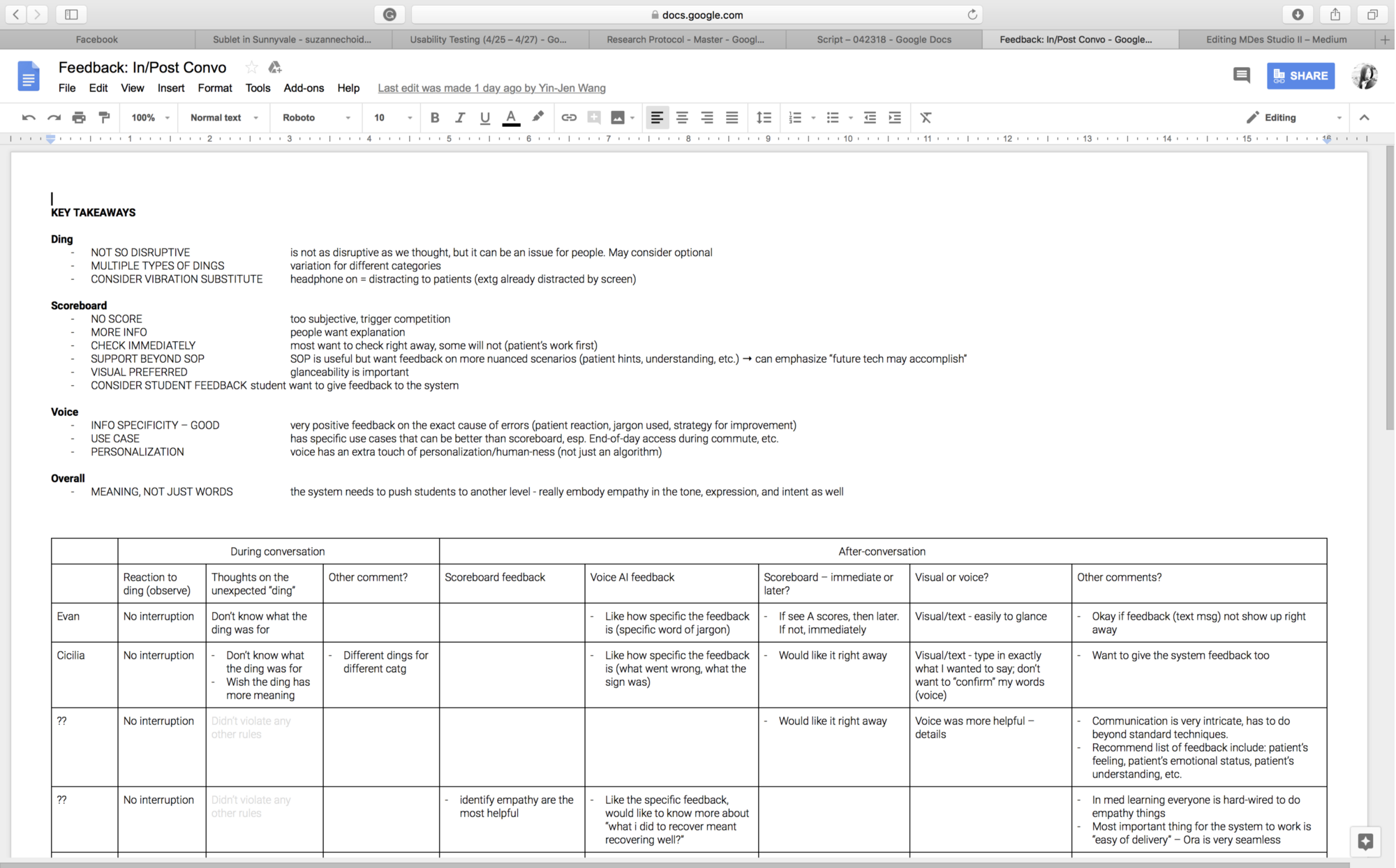

Evaluative Stage

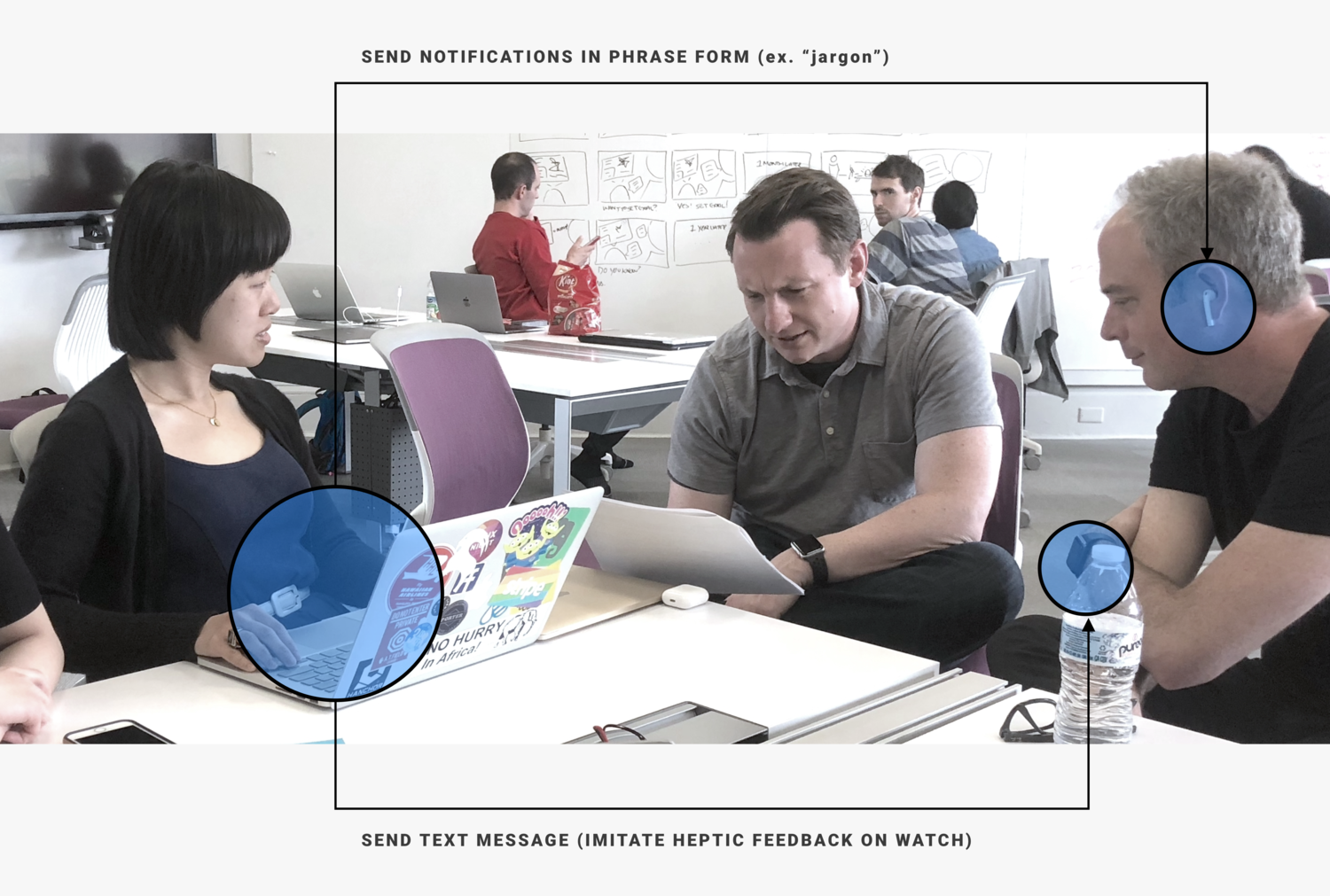

In this stage, we planned and ran user tests of our final prototype using the Wizard of Oz method.

Reflection and Next Steps

User Research

As we planned our research throughout the process, we found that our target users, third-year medical students, were often busy with their hospital rotations. As a result, we did our best to adapt a range of research methods to the resources available to us.

AI

We spent a lot of time researching AI’s capabilities and limitations. In the medical field, many contextual learning challenges are difficult for AI to address, especially because AI requires sensitive personal medical data to provide proper support. As a result, we had to consider which datasets could be used to train the AI assistant and how the patient consent process should work so that our design could function properly.

Design

I was primarily responsible for building the high-fidelity prototypes and testing them with our target group. I explored many different options using emerging technologies to see how they could fit into the learning context.

Next Steps

For any AI-supported product, it is hard to evaluate the design through Wizard of Oz testing alone. The critical next step is to build a minimum viable product, which means building the AI model and the supporting hardware. We also need to consider the social factors of communication training, and we would like to gather feedback from all stakeholders.